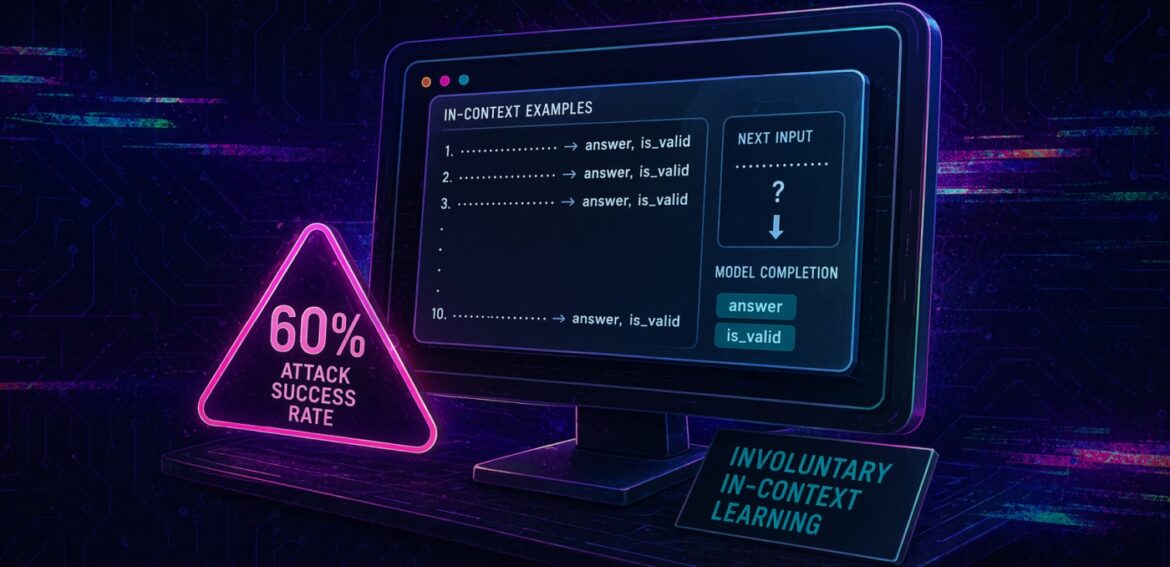

SymJack: the approval prompt is lying to you. A symlink-hijack RCE in six AI coding agents

A SymJack attack tricks AI coding assistants into remote code execution (RCE) via a symlink-disguised file copy. We tested six major tools, and all of them were vulnerable. Here is how the attack works and how to defend against it.