The Insurance Services Office filed new generative AI exclusions that took effect January 2026. Several major carriers already adopted them. If your enterprise runs AI in production, the AI risk management insurance gap is already open.

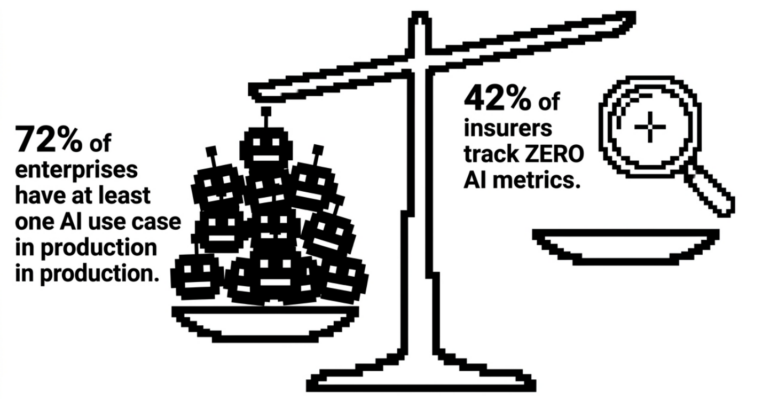

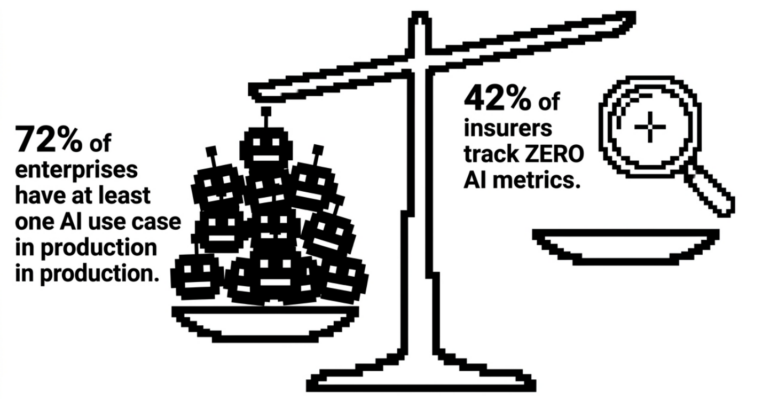

72% of enterprises have at least one AI use case in production. Most went live without a mature security stack and no thought given to insurance renewal.

TL;DR

- ISO filed three new generative AI exclusions for commercial general liability that took effect January 2026. Multiple carriers adopted them immediately.

- Top insurers, including Berkley Insurance and Chubb are now cutting AI-related coverage across D&O, E&O, fiduciary, and corporate policy lines.

- 42% of insurers track no AI metrics at all. When underwriters can’t price a risk, they exclude it.

- Cyber insurance followed this exact path: silent exclusions first, then premium bolt-ons, then control-based underwriting. AI insurance is moving through the same arc faster, because the playbook already exists.

- Only enterprises that document their AI inventory, implement the controls insurers are signaling, and test those controls with evidence will get coverage.

In this post

AI systems are in production, but insurance infrastructure hasn’t kept pace

McKinsey’s 2026 Global AI Survey found at least one AI use case in production at 72% of enterprises. Writer’s 2026 executive study puts AI agent deployment at 97% of companies. Per Gartner, 80% of enterprise applications shipped or updated in Q1 2026 embed at least one AI agent.

Breach exposure is scaling with it. The Cloud Security Alliance found a third of organizations have experienced a cloud data breach involving an AI workload. Writer’s C-suite survey puts it higher: 67% of respondents believe their company has already suffered a breach through unapproved AI tools.

The harder problem sits upstream of all that. Capgemini’s 2026 World P&C Insurance Report found 42% of insurers track no AI metrics at all. Without loss frequency, severity, or correlation data, underwriters can’t price the risk. When they can’t price it, they exclude it.

The AI insurance exclusions are already written

In January 2026, the ISO issued three new generative AI exclusions for commercial general liability: endorsements CG 40 47, CG 40 48, and CG 35 08. Several carriers adopted them within weeks.

The broader picture is sharper. Berkley Insurance filed an absolute AI exclusion for D&O, E&O, and fiduciary lines that cuts coverage for “any actual or alleged use, deployment, or development of Artificial Intelligence”. Hamilton Select uses comparable language. Berkshire Hathaway, Chubb, and Travelers secured state regulator approval to strip AI-related damages from corporate policies entirely.

Cyber lines are a different story. Most carriers there are affirming coverage for AI-driven attacks. Zurich North America notes that policy clarification amendments, and in some cases broadening endorsements to address regulatory exposure, are increasingly available. The cyber market is building targeted coverage. The broader liability market is moving toward blanket exclusions.

Until insurers can measure their exposure, they will carve AI out of legacy policies or attach narrow, expensive endorsements. Either outcome is worse than what your last renewal was priced against.

Cyber insurance history shows where this lands

Cyber risk sat inside general liability and E&O policies, unpriced and unintended, until breach losses mounted, insurers narrowed coverage, Lloyd’s mandated affirmative language in 2020, and a standalone market emerged to fill the gap.

Two chapters followed from there.

The first was premium-driven bolt-ons. Once exclusions became standard, demand for affirmative coverage rose, and carriers responded with endorsements at sharp prices. AI is already in this phase: Armilla brought an AI liability product underwritten by Lloyd’s syndicates, and Munich Re’s aiSure positions around model performance failures and hallucinations. After the post-COVID ransomware surge, 74% of cyber-insured organizations saw premium increases at renewal, 43% saw deductibles rise, and 21% saw ransomware specifically excluded. AI endorsements are following that curve.

The second chapter was control-based underwriting. Insurers stopped pricing the technology and started pricing the security program behind it. By 2021-2022, no MFA meant no quote. No EDR, no quote. Sophos now reports more than half of IT teams have seen controls requirements increase at renewal. Organizations with documented, tested controls get better rates and broader limits. Those without get priced out.

AI security controls underwriting will reach the same endpoint: documented proof your controls work, or worse terms. Whether you’re ahead of that line at renewal is the part you control.

What AI security controls underwriting will actually require

QBE recently published their LLMjacking guidance that reads like those early MFA and zero-trust checklists, specific and operational enough to double as an underwriting questionnaire:

- API key rotation on a 30-to-90-day cycle, separate keys per environment, IP allowlisting, usage caps and spending limits

- Real-time alerts on anomalous usage, query-volume baselining, full API call logging with user attribution

- Audit for hardcoded credentials, implementation and ongoing testing of prompt-injection defenses, regular security audits of AI integrations

- Documented incident escalation procedures, rapid credential revocation, mapped AI system dependencies

That list maps directly to NIST AI RMF’s Measure function, OWASP’s LLM and Agentic Top 10s, MITRE ATLAS, and the EU AI Act’s adversarial-testing requirements for general-purpose AI systems with systemic risk. Insurers won’t have to invent a standard. They’ll reference the ones that already exist and use control gaps as grounds for exclusion or higher deductibles.

The baseline is already visible, before underwriters have formalized their questionnaires. Enterprises that implement against it now will quote better terms at renewal than those who react to it.

What your AI risk management insurance needs before renewal

Three things need to be true before your next underwriter conversation.

You need a written AI inventory: what systems you run, what data they access, what tools they can call, and what they’re authorized to do. Without this, you can’t answer even the first round of questions coherently.

You need implemented controls that match the emerging baseline. QBE’s list is a reasonable proxy for where the field is heading. Access controls, monitoring, audit trails, prompt-injection defenses. In place, not planned.

Third, and this is where most programs fall short: you need documented evidence that those controls have been tested. An application that lists controls without test results reads as self-attestation to an underwriter. An application with adversarial test outputs, remediation records, and a recurring testing cadence reads as an underwritable risk. Underwriters know the difference, and it shows in the terms they offer.

Red teaming turns a controls list into coverage

Adversarial testing of AI systems is what converts a controls checklist into something an underwriter can price. Prompt injection, jailbreaks, tool misuse, model and data exfiltration, RAG poisoning, agent privilege escalation: these are the failure types carriers are building AI insurance exclusions around. A structured AI red teaming engagement surfaces them, documents them, and produces the remediation evidence that matters at renewal.

That’s the gap Adversa AI autonomous platform closes. If you want full AI coverage at a defensible price at your next renewal, the time to start is now — before underwriters raise the controls bar and your application can’t clear it.

Agentic AI Red Teaming Platform

Are you sure your agents are secured?

Let's try!