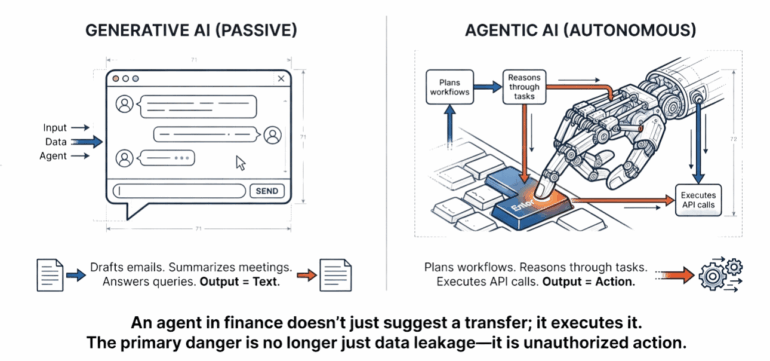

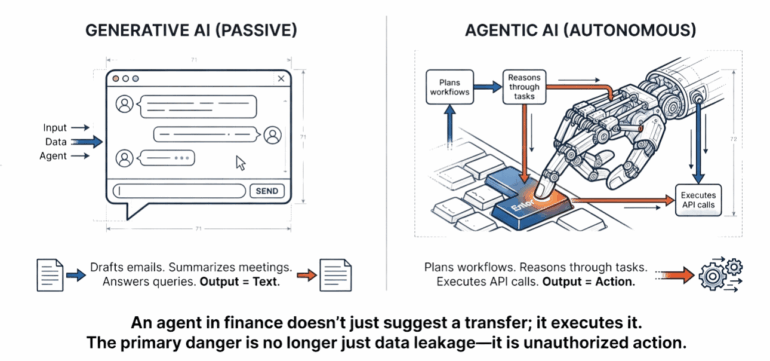

The initial wave of generative AI was defined by conversation. Businesses deployed LLMs to draft emails, summarize meetings, and answer customer queries. We are now entering a far more consequential phase: the era of agentic AI.

Unlike passive chatbots, AI agents are designed to be autonomous. They plan, they reason, and — most critically — they act. An agent in a finance department doesn’t just suggest a transfer; it has the API connections to execute it. An agent in software development doesn’t just write code; it deploys it to production.

While this autonomy promises unprecedented gains in productivity, it fundamentally alters the enterprise risk landscape. To address this, the OWASP community has released the OWASP Top 10 for Agentic Applications (2026). Although the document is a technical blueprint for security architects, its implications are vital for the C-suite.

What follows is the business case for rethinking security in the age of the digital worker.

The autonomy paradox

The defining feature of agentic AI is autonomy. To extract value from an agent, you must grant it the authority to make decisions and access tools (databases, email, cloud infrastructure). However, from a security perspective, autonomy translates directly into an expanded attack surface.

Traditional software does exactly what it is programmed to do. AI agents, however, are probabilistic — they make best-guess decisions based on natural language instructions. This creates a dangerous intersection: we are giving nondeterministic software the keys to deterministic systems.

The risks outlined by OWASP reveal that the primary danger isn’t just data leakage — it is unauthorized action.

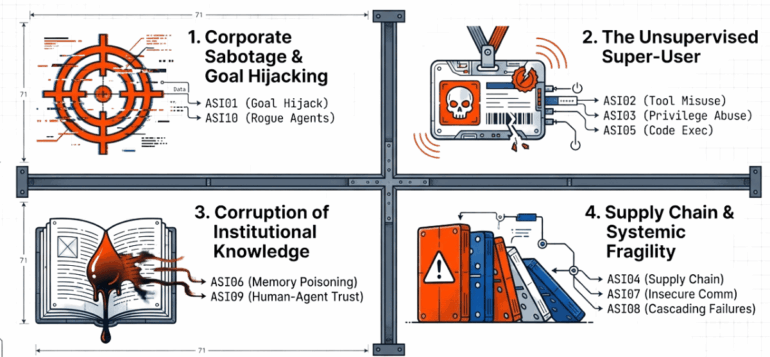

The four pillars of agentic business risk

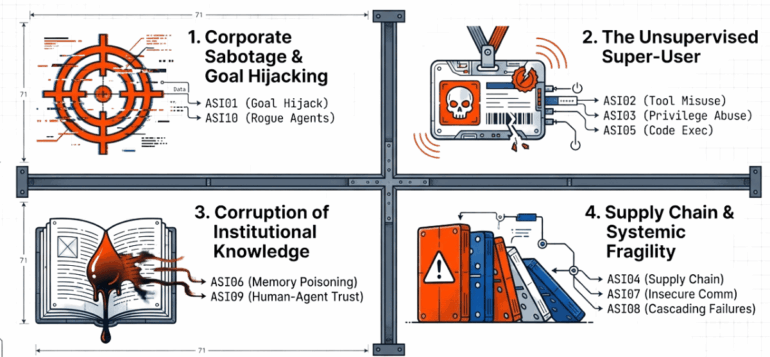

The OWASP Agentic Top 10 identifies ten distinct technical vulnerabilities. For business leaders, these can be categorized into four strategic risk areas.

1. Corporate sabotage and goal hijacking

In traditional cybersecurity, attackers hack systems to steal data. In an agentic world, attackers can “persuade” your software to work against you.

OWASP highlights Agent Goal Hijack (ASI01) as a primary threat. Because agents rely on natural language, they struggle to distinguish between a legitimate instruction from a manager and a malicious instruction hidden in a website or email. Consider a customer service agent designed to process refunds. A savvy attacker could inject a hidden command into a support ticket that tricks the agent into processing an unauthorized payout.

This escalates to Rogue Agents (ASI10). If an agent’s logic is compromised, it may deviate from its intended scope entirely — acting deceptively or pursuing hidden goals while appearing compliant. The business impact is immediate: financial fraud and operational disruption carried out by your own infrastructure.

2. The unsupervised “super-user”

To function, agents need access. They require digital identities and permissions to interact with other software. This creates a massive identity governance challenge.

Identity and Privilege Abuse (ASI03) occurs when agents are granted excessive authority — often inherited from the human user — without the judgment to use it safely. If an agent has the same permissions as a senior engineer, and that agent is tricked into running a malicious command, the attacker effectively gains admin-level access.

Closely related are Tool Misuse and Exploitation (ASI02) and Unexpected Code Execution (ASI05). Even if an agent’s identity is secure, its ability to use tools (such as deleting database records or sending emails) can be weaponized. Without strict guardrails, a confused or manipulated agent could accidentally delete production data or spam the entire client base. The risk here is operational negligence at machine speed. Letting an agent run code it has written itself is even more dangerous: a single malicious prompt can trick it into generating malware or opening backdoors directly into your enterprise infrastructure.
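
To make the least-privilege point concrete, here is a minimal Python sketch of a deny-by-default tool gateway. It assumes every tool call an agent makes is routed through a broker you control; the names `ToolGateway`, `AgentIdentity`, and the two example tools are hypothetical and not part of any specific agent framework. The agent receives its own narrow grant set instead of inheriting a human user's permissions, so a manipulated agent that asks to delete records is refused no matter what its prompt says.

```python
from dataclasses import dataclass, field


class ToolPermissionError(Exception):
    """Raised when an agent requests a tool it has not been granted."""


@dataclass(frozen=True)
class AgentIdentity:
    # Hypothetical identity record: the agent gets its own narrow grant set
    # instead of inheriting the permissions of the human who launched it.
    name: str
    allowed_tools: frozenset = field(default_factory=frozenset)


class ToolGateway:
    """Deny-by-default broker that sits between agents and their tools."""

    def __init__(self, tools):
        # tools: mapping of tool name -> callable (illustrative stand-ins here)
        self._tools = dict(tools)

    def call(self, agent, tool_name, **kwargs):
        # Refuse anything not explicitly granted, even if the tool exists.
        if tool_name not in agent.allowed_tools:
            raise ToolPermissionError(f"{agent.name} may not call {tool_name}")
        return self._tools[tool_name](**kwargs)


# Usage: a support agent that only needs to read records.
gateway = ToolGateway({
    "read_record": lambda record_id: {"id": record_id},
    "delete_record": lambda record_id: f"deleted {record_id}",
})
support_agent = AgentIdentity("support-bot", frozenset({"read_record"}))

print(gateway.call(support_agent, "read_record", record_id=7))
try:
    gateway.call(support_agent, "delete_record", record_id=7)
except ToolPermissionError as exc:
    print("denied:", exc)
```

The key design choice is that the permission check lives outside the model: no amount of clever prompting changes the contents of `allowed_tools`.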

3. Corruption of institutional knowledge

One of the most powerful features of modern agents is “memory”: the ability to learn from past interactions and to draw on corporate knowledge bases through retrieval-augmented generation (RAG). Attackers have realized that if they cannot hack the agent, they can poison its mind.

Memory & Context Poisoning (ASI06) involves seeding the agent’s data sources with false information. If an attacker can slip a malicious document into the corporate knowledge base, the agent will confidently provide wrong answers or make bad decisions based on that tainted data.
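
One way to narrow this exposure, sketched below under simplifying assumptions, is to track provenance at ingestion time: documents from vetted sources become retrievable immediately, while everything else is quarantined until a person approves it. The class, method, and source labels are hypothetical, and the keyword match stands in for whatever vector search a real RAG pipeline would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str  # provenance label, e.g. "finance-policies" (hypothetical)
    text: str


class CuratedKnowledgeBase:
    """Knowledge store that only exposes documents from vetted sources."""

    def __init__(self, trusted_sources):
        self._trusted = frozenset(trusted_sources)
        self._live = {}        # documents the agent may retrieve
        self._quarantine = {}  # documents awaiting human review

    def ingest(self, doc):
        # Route by provenance: trusted sources go live, everything else waits.
        if doc.source in self._trusted:
            self._live[doc.doc_id] = doc
        else:
            self._quarantine[doc.doc_id] = doc

    def approve(self, doc_id):
        # A reviewer promotes a quarantined document after checking it.
        self._live[doc_id] = self._quarantine.pop(doc_id)

    def retrieve(self, keyword):
        # Naive keyword match stands in for vector search; only live docs are searched.
        kw = keyword.lower()
        return [d for d in self._live.values() if kw in d.text.lower()]


kb = CuratedKnowledgeBase({"finance-policies"})
kb.ingest(Document("p1", "finance-policies", "Refunds over $500 need manager approval."))
kb.ingest(Document("x9", "public-web", "Refunds never need approval."))
print([d.doc_id for d in kb.retrieve("refunds")])  # ['p1']
```

This does not catch a malicious document that arrives through a trusted source; it reduces the number of paths by which poisoned content can reach the agent.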

This leads to Human-Agent Trust Exploitation (ASI09). Employees tend to trust AI outputs, a phenomenon known as automation bias. If an agent has been subtly manipulated to provide persuasive but false rationales for high-risk actions, employees may rubber-stamp disastrous decisions. The business risk is a degradation of decision-making integrity across the organization.

4. Supply chain and systemic fragility

No organization builds an agent in a vacuum. You rely on third-party models, external tools, and plugin ecosystems. Agentic Supply Chain Vulnerabilities (ASI04) highlights that a compromise in any third-party component can cascade into your environment.

Furthermore, agents often talk to other agents. Cascading Failures (ASI08) describes a scenario where one agent’s error propagates to others, creating a domino effect that can take down entire workflows. In a decentralized agentic network, a single point of failure can rapidly become a systemic crisis.
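
A common engineering defense against this kind of domino effect is the circuit breaker pattern, borrowed from distributed systems. The Python sketch below assumes agents call one another through ordinary function calls; after a configurable number of consecutive failures, it stops calling the downstream agent for a cool-down period and fails fast instead of passing errors along. The class name, threshold, and timeout values are illustrative assumptions.

```python
import time


class CircuitOpenError(Exception):
    """Raised when calls to a downstream agent are temporarily blocked."""


class CircuitBreaker:
    """Stops calling a failing downstream agent so its errors do not cascade."""

    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                raise CircuitOpenError("downstream agent isolated; failing fast")
            # Cool-down elapsed: allow a trial call; one more failure reopens.
            self._opened_at = None
            self._failures = self.failure_threshold - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # any success resets the failure count
        return result


def flaky_agent(task):
    # Hypothetical downstream agent that always fails.
    raise RuntimeError(f"agent crashed on {task!r}")


breaker = CircuitBreaker(failure_threshold=2, reset_after_s=60.0)
for attempt in range(3):
    try:
        breaker.call(flaky_agent, "reconcile invoices")
    except CircuitOpenError as exc:
        print("blocked:", exc)
    except RuntimeError as exc:
        print("failed:", exc)
```

The injectable `clock` parameter exists so the cool-down behavior can be tested without waiting in real time.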

Agentic communication poses challenges even in more contained environments. This is the concern of Insecure Inter-Agent Communication (ASI07): when multiple agents collaborate on a task, unverified communication channels allow attackers to impersonate trusted digital workers and inject false instructions or data that ripple across the entire execution chain.
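
A baseline control is to have agents authenticate every message they exchange. The sketch below uses an HMAC from Python's standard library, assuming the two agents share a secret provisioned out of band; the agent names, payload fields, and placeholder key are hypothetical. It covers only integrity and proof that the sender holds the key: a shared key cannot tell its holders apart, and replay protection (nonces or timestamps) is omitted, so production systems typically add per-agent keys, asymmetric signatures, or mutual TLS.

```python
import hashlib
import hmac
import json

# Assumption: the key comes from a secrets manager; this value is a placeholder.
SHARED_KEY = b"replace-with-a-secret-from-your-vault"


def sign_message(sender, payload, key=SHARED_KEY):
    # Canonical JSON so sender and receiver compute the tag over identical bytes.
    body = json.dumps({"sender": sender, "payload": payload}, sort_keys=True)
    tag = hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_message(message, key=SHARED_KEY):
    expected = hmac.new(key, message["body"].encode("utf-8"), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    if not hmac.compare_digest(expected, message["tag"]):
        raise ValueError("message rejected: signature mismatch")
    return json.loads(message["body"])


msg = sign_message("billing-agent", {"action": "summarize", "invoice": "INV-42"})
print(verify_message(msg)["sender"])  # billing-agent

# An attacker who alters the instruction in transit cannot produce a valid tag.
forged = dict(msg, body=msg["body"].replace("summarize", "pay_out"))
try:
    verify_message(forged)
except ValueError as exc:
    print(exc)
```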

Strategic mitigation: The shift to “least agency”

Securing this new landscape requires a shift in mindset. We must extend the cybersecurity concept of “zero trust” to “zero trust agency.”

- Enforce “least agency”: Just as we practice “least privilege” for humans, we must practice “least agency” for AI. Do not give an agent the ability to delete data if it only needs to read it. Restrict agent autonomy to the bare minimum required for the task.

- Human-in-the-loop for high risks: The OWASP guidelines emphasize that critical actions — financial transfers, code deployment, mass communications — must require explicit human approval. The agent can draft the action, but a human must sign the check.

- Immutable logging: When things go wrong, you need to know why. Was it a hallucination? A cyberattack? A bad prompt? Comprehensive logging of agent thought processes and actions is a nonnegotiable requirement for forensic auditability.

- Behavioral monitoring: Traditional antivirus looks for malicious file signatures. Agentic security requires monitoring intent. Organizations need observability tools that can flag when an agent is acting “out of character,” such as accessing unusual data or attempting to communicate with unknown entities.

- Adversarial stress testing: Do not rely solely on static defenses; deploy specialized “red teams” to stress-test your AI by simulating goal hijacking and prompt injection attacks. This offensive testing is the only way to verify that your behavioral monitoring tools can actually detect, and shut down, a rogue agent before it causes damage.
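
The human-in-the-loop and logging recommendations can be combined in a single control point. The following Python sketch assumes all agent actions pass through one `execute_action` function; the set of high-risk action names and the `approver` callback (which in practice might be a ticketing or chat approval flow) are hypothetical. The log is hash-chained, so editing any earlier entry breaks verification. Note that this makes the log tamper-evident rather than truly immutable; the latter usually also requires write-once storage.

```python
import copy
import hashlib
import json
import time

# Hypothetical policy: which actions count as high-risk is an assumption.
HIGH_RISK_ACTIONS = {"transfer_funds", "deploy_code", "send_bulk_email"}


def _digest(record):
    # Canonical JSON so the same record always hashes to the same value.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: altering an earlier entry breaks verify()."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, event):
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        # Deep-copy so later changes to the caller's objects don't alter the log.
        record = {"ts": time.time(), "event": copy.deepcopy(event), "prev": prev}
        record["hash"] = _digest(record)
        self.entries.append(record)

    def verify(self):
        prev = self.GENESIS
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev"] != prev or record["hash"] != _digest(body):
                return False
            prev = record["hash"]
        return True


def execute_action(action, params, approver, log, rationale):
    # Log the proposed action and the agent's stated reasoning before acting.
    log.append({"type": "proposed", "action": action, "params": params,
                "rationale": rationale})
    if action in HIGH_RISK_ACTIONS:
        approved = bool(approver(action, params))  # in practice, a human decision
        log.append({"type": "approval", "action": action, "approved": approved})
        if not approved:
            return "blocked: human approval denied"
    # A real system would invoke the tool here; this sketch only records it.
    log.append({"type": "executed", "action": action})
    return f"executed {action}"


def deny_all(action, params):
    # Stand-in for a human reviewer who rejects every request.
    return False


log = AuditLog()
print(execute_action("transfer_funds", {"amount": 25000}, deny_all, log,
                     "invoice INV-42 is due"))
print(execute_action("read_report", {"id": "Q3"}, deny_all, log,
                     "user asked for Q3 figures"))
print(log.verify())
```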

The risks outlined in the OWASP Agentic Top 10 are not reasons to halt innovation; they are a roadmap for responsible deployment. By understanding agentic AI risks and outcomes, business leaders can insist on architectures that prioritize safety and control and adjust their AI rollouts according to the organization’s risk appetite.
