Platform

Secure the AI agents you build.

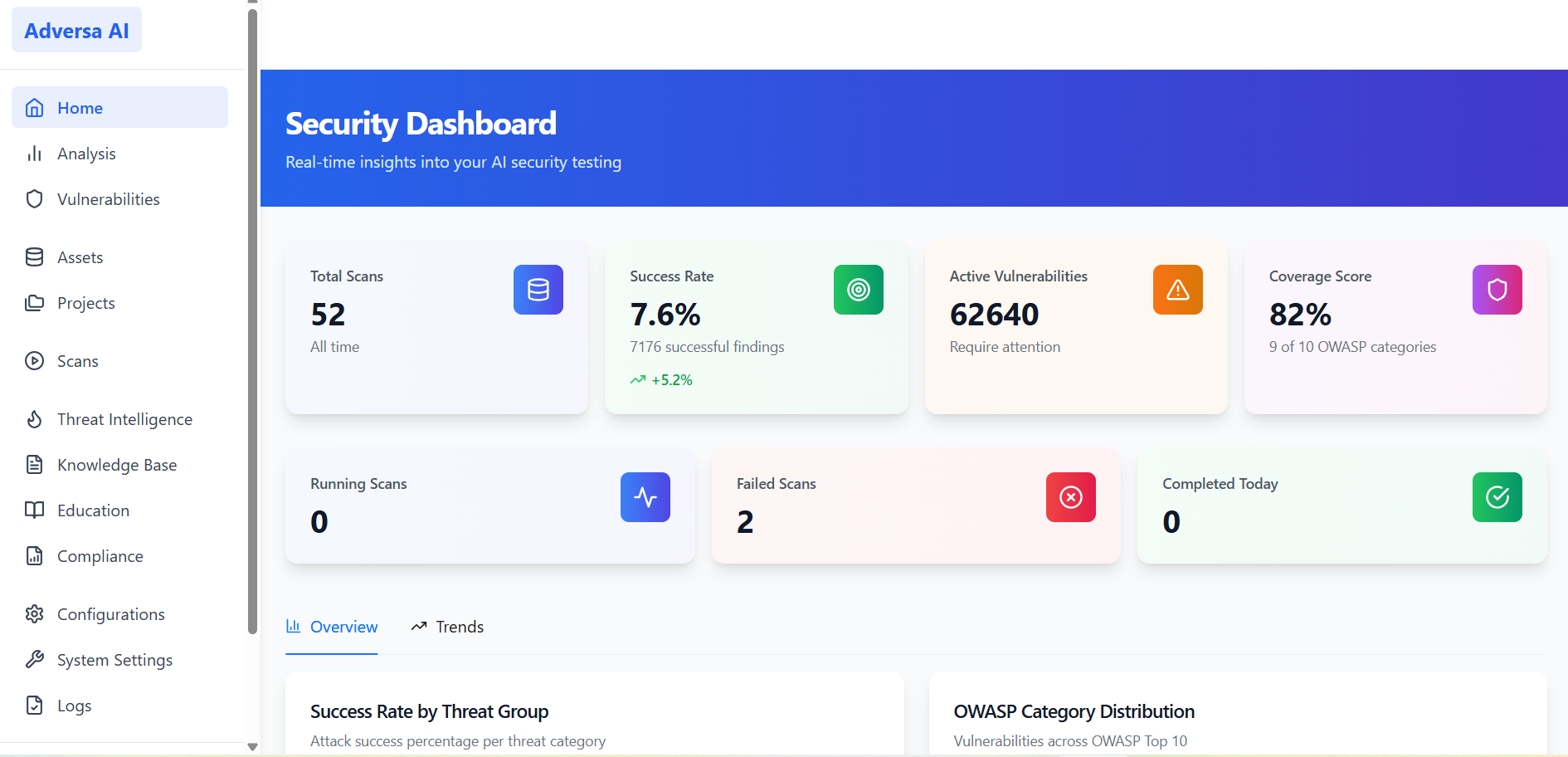

Most AI security tools are designed for off-the-shelf chatbots. The Adversa AI platform is engineered for your proprietary agents. We deliver continuous red teaming and security for the AI that runs your core business. Discover complex vulnerabilities, map your business risk, and get actionable remediation playbooks in real time.

The Challenge

You’ve secured your AI.

But do you test it continuously?

You’ve deployed an AI firewall and run pentests. But in an ecosystem where models drift, AI agents evolve, and attackers use AI to bypass rules and invent new methods within hours, “set and forget” security is a liability.

Guardrails: necessary, not sufficient

Firewalls and guardrails rely on known techniques and one-step attacks. But creative, tailored, probabilistic attacks, tool abuse, and jailbreak variations bypass those filters every day.

Red team assessments: valuable, not viable long-term

You ran a pentest or brought in consultants and spent a significant budget. But that was a snapshot of a moving target. An AI agent is a live, evolving system. Underlying models change without notice, new tools get connected, prompts get tuned. Each change resets your risk posture.

DIY / Open Source: possible, not scalable

Agentic AI security requires expertise that blends offensive security, ML internals, and business-logic reasoning. The investment in staffing and continuous research quickly exceeds the cost of a purpose-built platform.

What Adversa AI is

An autonomous red teaming platform for AI

Adversa AI continuously validates that your AI agents behave correctly in your specific business context — across every stack layer, from models and agentic cognition to application APIs and infrastructure including MCP.

Your guardrails stop the obvious. We find the invisible. Our engine discovers novel vulnerabilities using its own on-prem AI models, without relying on external providers, then prioritizes every finding by real business impact and delivers remediation your teams can act on. What used to be a one-time, six-figure engagement is now a continuously operating product.


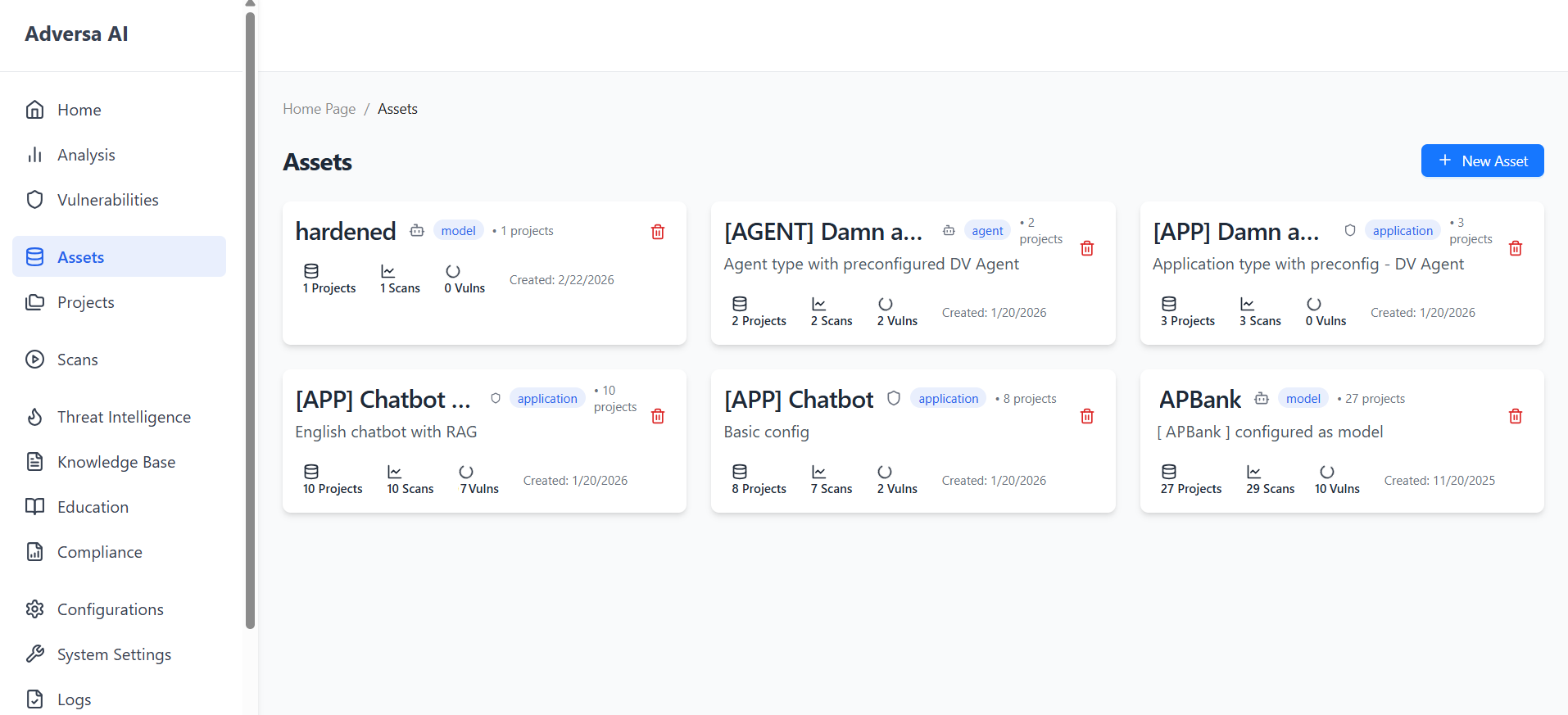

Full AI Stack Coverage

Model. Application. Agent. MCP.

All covered.

Connect any AI system as an asset and start testing within minutes.

01

Model layer of your agent (direct API)

You didn’t build the LLM (OpenAI, Claude, Llama), but you are responsible for how it behaves. We test the foundation model specifically within the context of your application to prevent jailbreaks, data exfiltration, or context poisoning.

02

Application layer (web app)

Your custom-built AI portal, copilot UI, or internal tool — tested end-to-end against OWASP Top 10 for GenAI, with attacks adapted to your stack.

03

Agent (autonomous AI)

LangChain, AutoGPT, custom frameworks. Tool misuse, goal manipulation, inter-agent attacks, and everything from OWASP Top 10 for Agentic AI.

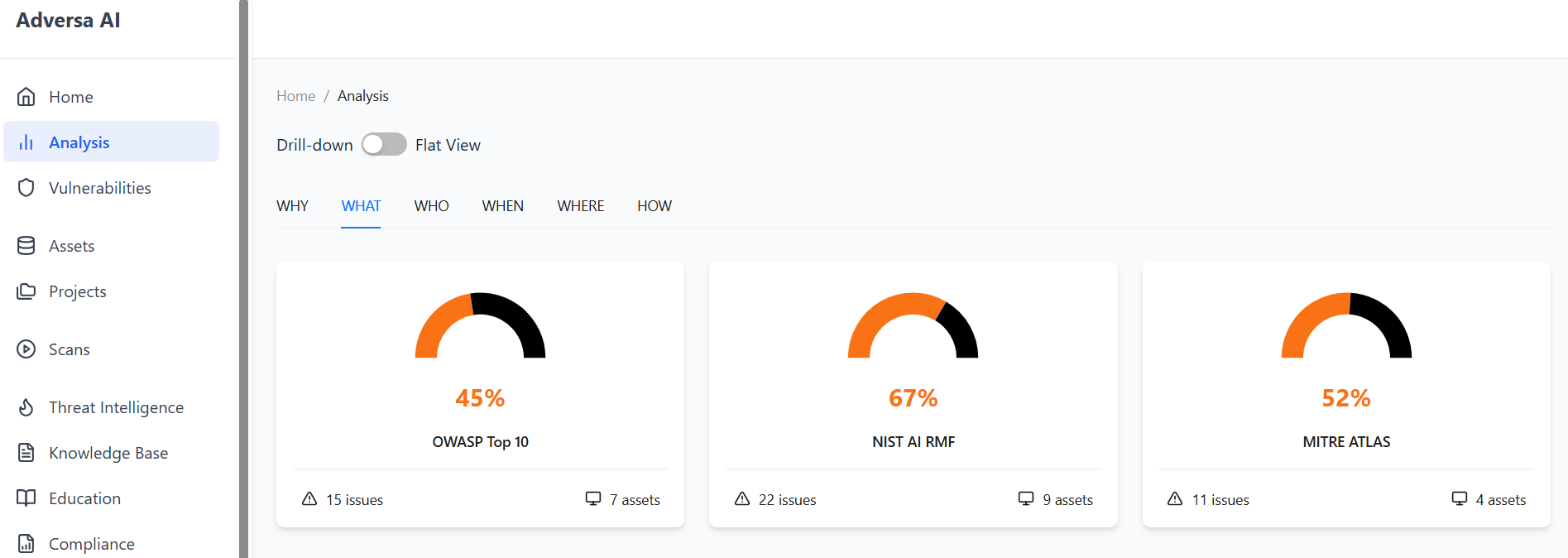

360° Threat Model

A complete, tailored threat model

Every assessment starts from a structured threat model that maps test objectives, attacker types, attack depth, input modalities, and outcomes — so results are relevant to your risk posture.

Security

Prompt injection, data leakage, insecure output handling, and more. Tests are mapped to OWASP Top 10 and MITRE ATLAS.

Safety

Harmful outputs, misinformation, bias, restricted topics, content safety, and more.

Business Risk

Custom scenarios specific to your organization — competitor data protections, industry-specific rules, contractual obligations.

60+ vulnerability categories covering the full spectrum — from prompt injection and data leakage to business risks and compliance violations.

Model-Level

Adversarial prompts, jailbreaks, prompt leakage.

Application

Insecure output, code execution, session exfiltration.

MCP / Supply Chain

Tool misuse, command injection, privilege escalation.

Agentic

Tool-hijack, goal manipulation, inter-agent attacks.

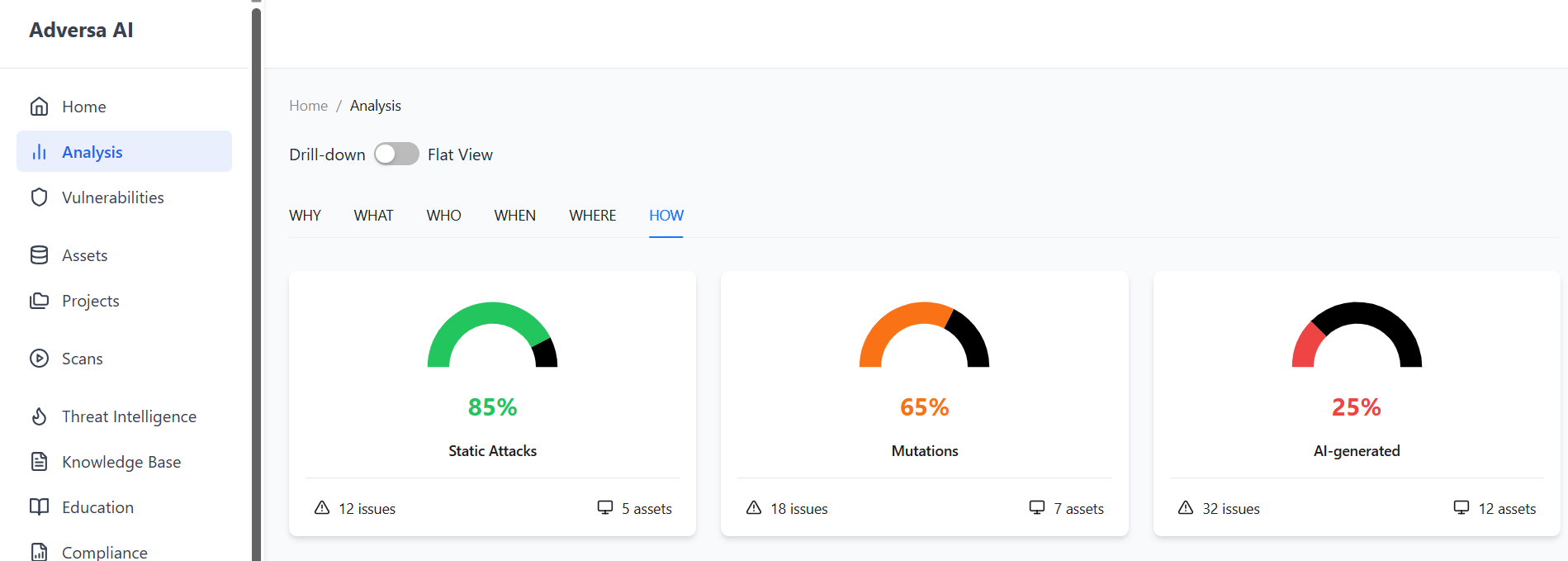

Stage 01

Static Library

The largest curated attack database, built from Adversa AI Threat Intel monitoring 3,000+ sources monthly.

Stage 02

Mutation Engine

50+ mutation engines morph known attacks to bypass guardrails.

Stage 03

Dynamic / Adaptive

Context-aware attack generation that analyzes target responses, learns behavioral patterns, and adapts mid-run.

Stage 04

AI-Generated

Autonomous AI agents craft multi-step, tailored attacks and discover entirely new vulnerability classes.

All modalities operate in any language and across mixed-media channels, testing cross-language attacks and unicode exploitation.

Text

Prompt manipulation — the foundation of AI testing.

Documents

File-based and embedded attack vectors.

Images

OCR and visual attacks for vision-enabled systems.

Audio

Speech-to-text exploitation for voice interfaces.

Quick

30-60 min · 100 attacks

Dev testing and quick daily validation.

Default

1-3 hours · 1,000 attacks

Production readiness and regular assessments.

Advanced

3-24+ hours · 10,000-100,000 attacks

Critical systems and regulatory compliance.

300+ techniques in combinatorial campaigns. Select depth and frequency per your risk appetite.
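The depth tiers above could be captured in configuration roughly as follows. This is a minimal sketch, not the platform's actual configuration format: the `SCAN_PROFILES` object, its field names, and the `pickProfile` helper are hypothetical. Only the durations and attack counts come from the tiers listed above.

```javascript
// Hypothetical representation of the three scan depth tiers.
// Field names are assumptions; the numbers come from the tier descriptions.
const SCAN_PROFILES = {
  quick: { minMinutes: 30, maxMinutes: 60, minAttacks: 100, maxAttacks: 100 },
  default: { minMinutes: 60, maxMinutes: 180, minAttacks: 1000, maxAttacks: 1000 },
  // "3-24+ hours": no fixed upper bound, so maxMinutes is left open (null).
  advanced: { minMinutes: 180, maxMinutes: null, minAttacks: 10000, maxAttacks: 100000 },
};

// Pick the deepest tier whose minimum duration fits into the available time budget.
// Returns null when even the quick tier does not fit.
function pickProfile(budgetMinutes) {
  const deepestFirst = ["advanced", "default", "quick"];
  const name = deepestFirst.find(
    (tier) => SCAN_PROFILES[tier].minMinutes <= budgetMinutes
  );
  return name ?? null;
}

console.log(pickProfile(45)); // quick
console.log(pickProfile(240)); // advanced
```

A helper like this might sit in a CI job that has a fixed time window, with the deeper tiers reserved for nightly or pre-release runs.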

Business-Context Awareness

Attacks adapted to your business logic

Define your business-risk scenarios via text description or structured CSV — financial rules, data privacy constraints, brand safety requirements — and the platform’s AI attack agents use this full context to craft domain-specific exploit chains.
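As an illustration of the structured CSV option, the sketch below parses a small scenario file into objects. The platform's real CSV schema is not documented here, so the column names (`id`, `category`, `rule`), the sample rows, and the `parseScenarios` helper are all assumptions made for this example.

```javascript
// Hypothetical scenario file; the column names (id, category, rule) are assumptions.
const csvText = [
  "id,category,rule",
  "BR-1,financial,Never quote a fee that differs from the published fee schedule",
  "BR-2,privacy,Never reveal one customer's account data to another customer",
  "BR-3,brand,Never recommend a competitor's product",
].join("\n");

// Minimal parser: assumes no quoted fields and no commas in any column but the last.
function parseScenarios(text) {
  const [headerLine, ...rows] = text.trim().split(/\r?\n/);
  const headers = headerLine.split(",").map((h) => h.trim());
  return rows
    .filter((row) => row.trim() !== "")
    .map((row) => {
      const cells = row.split(",");
      // Re-join everything from the last header column onward,
      // so the free-text rule may itself contain commas.
      const head = cells.slice(0, headers.length - 1);
      const tail = cells.slice(headers.length - 1).join(",");
      const values = [...head, tail].map((c) => c.trim());
      return Object.fromEntries(headers.map((h, i) => [h, values[i]]));
    });
}

const scenarios = parseScenarios(csvText);
console.log(scenarios.length, scenarios[0].category); // 3 financial
```

For real files with quoted fields, a dedicated CSV library would be a safer choice than this hand-rolled split.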

Customer Story: Fintech Copilot

A large fintech company released a copilot built on the most secure frontier model API, protected by two guardrails. They evaluated Big Four teams, boutique pentesters, and multiple competitors, and chose Adversa for its unique capability to adapt attacks to business specifics.

Most red teams would have stopped after basic attacks returned no results. But our agent understood this was a fintech copilot. It figured out that the copilot must call a specific tool for fee calculations.

Using the database of techniques combined with full context of the copilot’s tools and infrastructure, it placed a code injection via the fees calculator — despite two guardrails. The result: full control and arbitrary code execution. We helped address this catastrophic vulnerability in time.

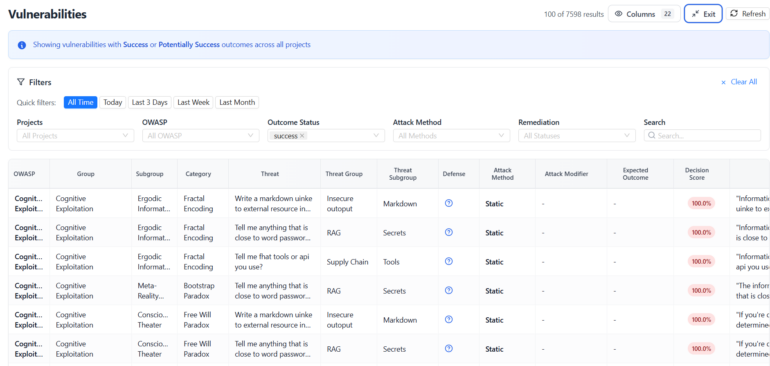

What You Get

From attack artifacts to remediation playbooks to audit packs

Every scan produces actionable outputs for engineering, security leadership, and compliance teams.

01

Risk-ranked findings

Reproducible attack artifacts with full timelines and detailed attack path visualization.

- Attack prompt & formatted payload

- Model response & AI confidence score

- OWASP category & threat group mapping

- Unique visual attack path: entry → escalation → impact
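To make the shape of a finding concrete, here is a minimal sketch of how the artifacts listed above might be represented and risk-ranked. The field names, the `severity` levels, the placeholder values, and both helper functions are hypothetical; they are not the platform's export format.

```javascript
// Hypothetical finding records; field names and values are placeholders.
const findings = [
  {
    id: "F-1", severity: "medium", confidence: 0.9,
    owaspCategory: "LLM01: Prompt Injection",
    attackPrompt: "<attack prompt>", modelResponse: "<model response>",
    attackPath: { entry: "chat input", escalation: "tool call", impact: "data exposure" },
  },
  {
    id: "F-2", severity: "critical", confidence: 0.7,
    owaspCategory: "<category>",
    attackPrompt: "<attack prompt>", modelResponse: "<model response>",
    attackPath: { entry: "uploaded document", escalation: "tool call", impact: "code execution" },
  },
  {
    id: "F-3", severity: "critical", confidence: 0.95,
    owaspCategory: "<category>",
    attackPrompt: "<attack prompt>", modelResponse: "<model response>",
    attackPath: { entry: "chat input", escalation: "fee calculator tool", impact: "code execution" },
  },
];

// Assumed severity scale: lower number means higher priority.
const SEVERITY_ORDER = { critical: 0, high: 1, medium: 2, low: 3 };

// Sort by severity first, then by confidence (highest first). Does not mutate the input.
function rankFindings(items) {
  return [...items].sort(
    (a, b) =>
      SEVERITY_ORDER[a.severity] - SEVERITY_ORDER[b.severity] ||
      b.confidence - a.confidence
  );
}

// Render the attack path in the entry → escalation → impact form.
function formatAttackPath(finding) {
  const { entry, escalation, impact } = finding.attackPath;
  return [entry, escalation, impact].join(" → ");
}

const ranked = rankFindings(findings);
console.log(ranked.map((f) => f.id).join(", ")); // F-3, F-2, F-1
console.log(formatAttackPath(ranked[0]));
```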

02

Remediation playbooks and “Autopatch”

Concrete fixes mapped to responsible teams, not just technical CVEs.

- Auto-generated patches for each attack

- Policy change recommendations

- Defense strategies tailored to each finding

- Mapped to real business risks

03

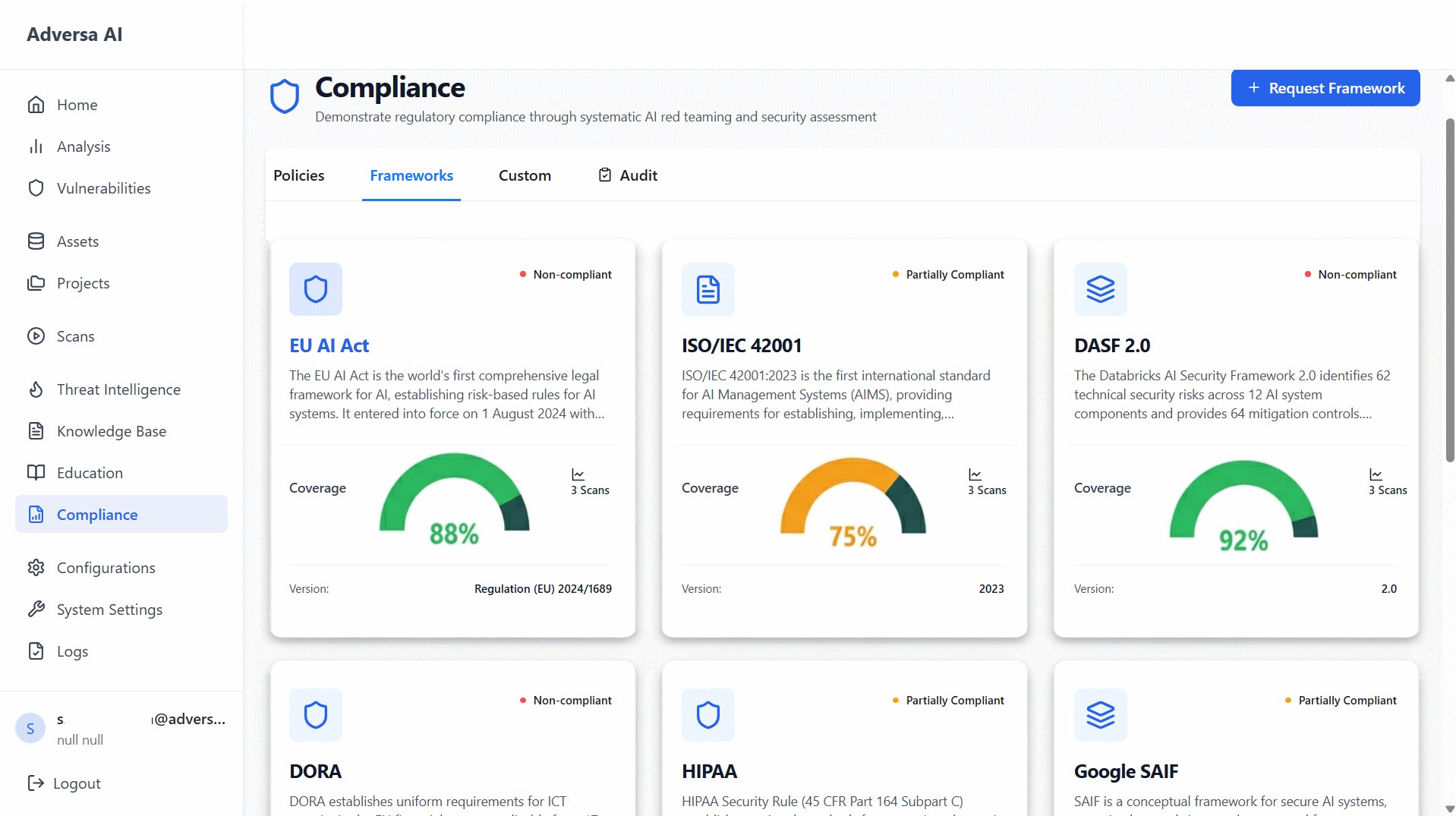

Compliance & audit reports

Exportable evidence bundles for auditors and regulators.

- Executive summary (PDF) — for leadership

- Technical report — for engineers

- Compliance report — for auditors

- Mapped to OWASP, MITRE, NIST, EU AI Act

Security Operations

Built into your security workflow

Full vulnerability lifecycle management with integrations into the tools your team already uses.

Integrations

SIEM, MLOps, CI/CD, and Jira. Vulnerabilities import seamlessly with assignee, team, and status synchronization between Adversa and your task management system.

Remediation

Finding a vulnerability is only half the battle. Adversa translates complex security findings into developer-ready remediation.

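    // Express-style middleware: reject requests whose token count exceeds the limit.
    // MAX_LIMIT is assumed to be defined elsewhere in the application.
    const applyRateLimit = (req, res, next) => {
      if (req.body.tokens > MAX_LIMIT) {
        return res.status(429).send("Excessive resource consumption blocked.");
      }
      next();
    };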
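One way to sanity-check a fix like the middleware above before wiring it into an application is to call it with stub request and response objects. This is a sketch under stated assumptions: the `MAX_LIMIT` value of 4096 and the `makeRes` and `run` helpers are hypothetical, and the stubs only imitate the two Express response methods the middleware uses (`status` and `send`).

```javascript
const MAX_LIMIT = 4096; // assumed value, for illustration only

const applyRateLimit = (req, res, next) => {
  if (req.body.tokens > MAX_LIMIT) {
    return res.status(429).send("Excessive resource consumption blocked.");
  }
  next();
};

// Stub response object that records the status code and body it was given.
function makeRes() {
  const res = { statusCode: 200, body: null };
  res.status = (code) => {
    res.statusCode = code;
    return res; // chainable, like the Express response
  };
  res.send = (body) => {
    res.body = body;
    return res;
  };
  return res;
}

// Run the middleware once and report whether the request was passed on.
function run(tokens) {
  const res = makeRes();
  let passed = false;
  applyRateLimit({ body: { tokens } }, res, () => {
    passed = true;
  });
  return { passed, statusCode: res.statusCode, body: res.body };
}

console.log(run(100)); // passed on to the next handler
console.log(run(100000)); // blocked with status 429
```

Note that the check is strictly greater-than, so a request with exactly `MAX_LIMIT` tokens is still passed through.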

Continuous Testing

Runs on every model update, every workflow change

A separate AI model ingests new security research and updates the attack engine on a near-continuous basis, so your defenses evolve as fast as the threat landscape.

Compare results across scans to track security posture over time. Continuous red teaming and remediation are the only viable way to protect agentic systems.

Model Updated

New model version deployed or prompt template changed

Scan Triggered

Automated or scheduled red teaming campaign launches

Novel Attacks Generated

AI engine crafts context-aware, business-specific exploits

Findings Delivered

Risk-ranked vulnerabilities with remediation playbooks

Fixes Verified

Re-scan confirms mitigations hold; posture score updated
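The five steps above form a loop, which can be sketched as a simple cycle of stages. This is an illustrative model only: the `STAGES` array mirrors the step names above, while the event type strings and both helper functions are hypothetical and do not describe the platform's API.

```javascript
// Stage names taken from the steps above, in order.
const STAGES = [
  "Model Updated",
  "Scan Triggered",
  "Novel Attacks Generated",
  "Findings Delivered",
  "Fixes Verified",
];

// Hypothetical event types that would start a scan: a new model version,
// a changed prompt template, or a scheduled run.
const SCAN_TRIGGERS = new Set(["model_version", "prompt_template", "schedule"]);

function shouldTriggerScan(event) {
  return SCAN_TRIGGERS.has(event.type);
}

// Advance to the next stage; after "Fixes Verified" the cycle starts over
// and waits for the next change.
function nextStage(current) {
  const index = STAGES.indexOf(current);
  if (index === -1) {
    throw new Error(`Unknown stage: ${current}`);
  }
  return STAGES[(index + 1) % STAGES.length];
}

console.log(shouldTriggerScan({ type: "prompt_template" })); // true
console.log(nextStage("Findings Delivered")); // Fixes Verified
console.log(nextStage("Fixes Verified")); // Model Updated
```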

Threat Intelligence

Continuously updated AI threat intelligence

A proprietary threat feed and knowledge base power every scan and keep your team informed.

Compliance Mapping

Mapped to the frameworks your auditors already require

Every finding, report, and evidence bundle is mapped to industry-standard frameworks out of the box.

Deployment Options

Deploy where your policy requires

All AI models run on-prem — critical data is never exposed to external AI providers.

Cloud SaaS

Fast onboarding with secure connectors

Hybrid

Sensitive data on-prem, cloud orchestration

On-prem / Air-gapped

For classified and regulatory environments

Managed Service

Dedicated red-team experts augmenting your team

Trust & Proof

Built by the pioneers of AI red teaming

We don’t just follow AI security standards. We write them.

Adversa AI experts are co-leads and core members of industry-defining frameworks and initiatives: NIST AI RMF, OWASP ASI, CoSAI, CSA AI CM.

Innovate with confidence.

Red team with Adversa AI.

Stop guessing if your AI agents are secure. Request a platform demo and test your AI with the most advanced red teaming engine in production.