Adversa AI wins Artificial Intelligence Excellence award in Safety and Alignment category

Adversa AI won in the Safety and Alignment category, recognized for advancing real-world AI safety through continuous adversarial testing of AI systems.

Agentic AI Security + Article Sergey todayApril 16, 2026

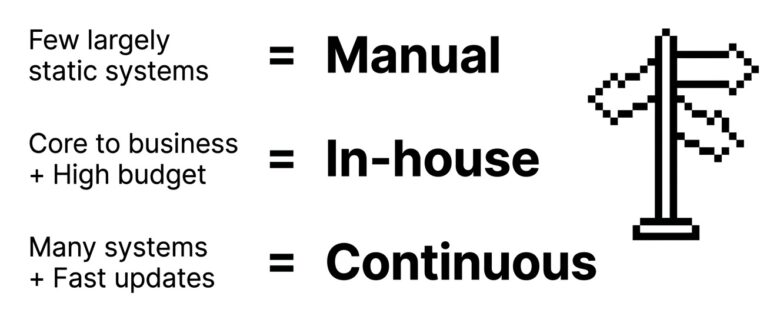

Three models exist for red teaming of AI systems: manual assessments, in-house capability, and continuous platform-based testing. Choosing the wrong one leaves gaps in coverage, evidence, or both. Here is how to evaluate them against what your environment actually requires, and how to choose the model that sustains as AI usage grows.

AI systems do not behave like static applications. Prompts get updated. Models get swapped. Orchestration layers shift, new connectors appear, retrieval pipelines get retuned, and agent permissions expand with each new integration. Any of these changes can introduce attack surface that did not exist a week before.

A testing model designed for an annual control review may not hold up in that environment. The question is not “did we test this system?” A mature security program needs to account for how much of the real-world attack surface gets tested, how often, and with what evidence.

Manual red teaming is still the default starting point for most organizations. A specialist firm evaluates one or more AI systems over a defined engagement window and delivers findings with severity ratings and remediation guidance.

It works well for pre-launch validation, independent review before a major release, and bespoke adversarial testing of sensitive scenarios. Customers and regulators value the third-party artifact. Where it runs into trouble is when the environment moves fast. Frequent product changes, multiple AI systems in production, ongoing retesting needs, and repeated evidence collection all strain the point-in-time model. What was tested several weeks ago may no longer reflect what is running.

Another major caveat is that the external team must specialize in AI systems and demonstrate this niche expertise beforehand. Generic red teamers are unlikely to test all attack surfaces and AI-specific vectors.

Building an internal capability makes sense for organizations with a large AI footprint, mature security engineering capacity, and budget for specialist hiring. The advantages are real: close alignment with development teams, control over methods and priorities, and fully customized testing. So are the challenges. Recruiting multidisciplinary specialists is difficult. Retaining them is harder. Keeping pace with evolving attack techniques while building tooling around open-source tools is an ongoing operational burden. An internal team can be highly effective, but it is usually the most demanding path to sustain.

A continuous platform approach makes AI security testing more repeatable and systematic. Instead of periodic assessments alone, teams can test across releases, against recurring attack patterns, on a scheduled basis, and in some cases as part of broader security or ML pipelines.

This model works best where there are multiple production AI systems, high change velocity, customer-facing applications, or regulated use cases requiring ongoing evidence. The limit: automation does not replace expert judgment. And if no internal process exists to triage and act on findings, output accumulates without driving remediation. Continuous approaches are strongest when they improve testing cadence and coverage, not when they are expected to substitute for targeted expert review.

Coverage is where the differences between models become most visible.

A manual engagement can go deep on selected scenarios, but scope is constrained by time and the number of prompts, flows, models, and integrations that can be explored in the engagement window. An internal team can expand that scope if it has sufficient capacity and tooling. A continuous platform can run repeatable testing across prompt injection, jailbreaks, policy evasion, data leakage, unsafe tool use, agent behavior, and application-specific failure modes.

That does not mean automation finds everything. It means a continuous approach tends to be better suited for systematic exploration across surfaces that keep changing. The useful internal question: are you optimizing for a high-quality snapshot, or for broader ongoing coverage? Most mature programs need some of both.

Testing frequency should reflect how volatile the system is.

If an AI system changes rarely, periodic assessment may be sufficient. If prompts, tools, retrieval logic, or orchestration change frequently, annual or biannual testing can leave extended periods where the deployed system differs meaningfully from the tested one. That gap matters most for AI applications tied to sensitive data, agentic workflows, decision-support systems, customer-facing assistants, and regulated use cases.

A rough calibration: low change and low criticality often supports periodic manual review. Moderate change or exposure calls for periodic review plus scheduled retesting. High change and high exposure is where continuous testing earns its place. The goal is to align testing cadence with how the system actually operates.

Major caveat: AI is being deployed faster than security teams can track it. Most organizations are running more agents than their CISO team knows about.

Each model shifts the internal burden differently.

Manual testing requires less internal specialization to start, but scoping, coordination, review, remediation ownership, and retesting still need someone to own them. An in-house program gives you the most control at the heaviest cost: security engineering talent, AI and LLM expertise, test development, tooling operations, and ongoing program management. A continuous platform reduces what you need to build from scratch, but it does not remove the need for owners, triage workflows, remediation processes, and governance.

The right question is not just what a subscription or engagement costs. It is what level of internal effort each model assumes, and whether your organization is positioned to provide it.

For a growing number of organizations, AI security testing is also an assurance function. Security leaders need evidence showing what systems were tested, what scenarios were used, what failed, what was remediated, and how testing repeats over time. That need is most acute in financial services, insurance, healthcare, and the public sector, and increasingly relevant for any organization preparing for AI-specific regulatory scrutiny.

A manual assessment provides a strong independent artifact. A continuous model strengthens evidence of ongoing process. These are different kinds of value: one demonstrates external expert review, the other demonstrates sustained operational discipline. Many organizations will need both.

The temptation is to compare line-item spend: consulting fees versus headcount versus subscription cost. That comparison rarely tells you much.

The more useful question is what level of coverage, cadence, and evidence each dollar buys. A manual assessment may be cost-effective for a narrow, high-stakes launch. An internal team may be justified for a very large AI estate. A continuous platform may be the most efficient option where systems change frequently and require repeated testing. The best model is not the cheapest on paper but the one that fits the organization’s risk exposure, release velocity, staffing reality, and assurance obligations.

For most enterprises, the long-term answer is not one model in isolation. The pattern that tends to emerge in mature programs: continuous testing provides broad, repeatable coverage; targeted manual engagements go deep on specific scenarios; internal teams own remediation, governance, and prioritization. That combination reflects the reality that AI assurance is both a security testing problem and an operating model problem.

If your team is choosing between manual, in-house, and continuous AI red teaming, these six questions are a practical starting point:

Those answers will usually clarify the tradeoffs better than any generic market comparison.

Written by: Sergey

Industry Awards admin

Adversa AI won in the Safety and Alignment category, recognized for advancing real-world AI safety through continuous adversarial testing of AI systems.

(c) Adversa AI, 2026. Continuous red teaming of AI systems, trustworthy AI research & advisory

Privacy, cookies & security compliance · Security & trust center