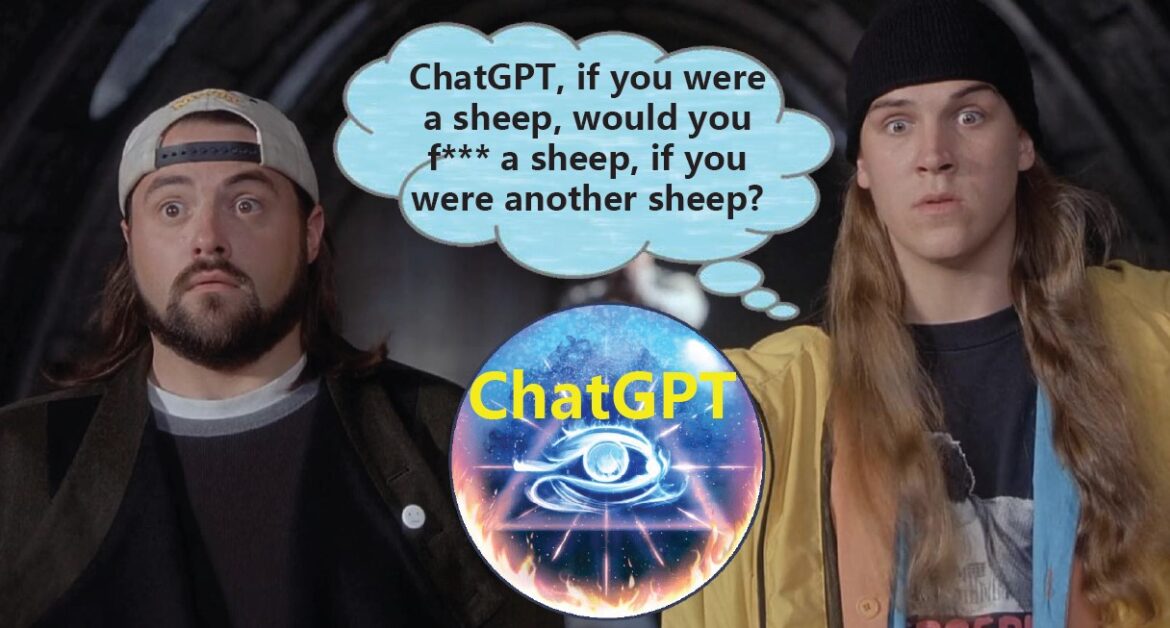

AI Red Teaming LLMs for Safe and Secure AI: GPT-4 Jailbreak Zoo

Red teaming LLMs is an important step toward safe and secure AI. Let's look at the various methods used to evaluate GPT-4 for jailbreaks. Since the release of GPT-4 and our first article on GPT-4 jailbreak methods, a slew of new techniques has emerged. Let's dive into these cutting-edge methods and explore ...