This report would not exist without the contributions of journalists, thought leaders, security professionals, and researchers who continue to challenge assumptions and spotlight risks. Your work drives the dialogue forward — and strengthens the future of secure AI.

Real breaches. Real consequences. Lessons the industry can’t afford to ignore.

Adversa AI published this incident-based report to expose how AI systems fail in the real world, why current defenses fall short, and what must change to secure the future of AI.


AI is already breaking — in ways most teams don’t see.

From chatbots leaking secrets to agents misfiring decisions, these risks aren’t hypothetical. They’ve already happened.

Why This Report Matters

This report was created for AI builders, defenders, and decision-makers. You’ll walk away with:

- The most common security failure patterns in GenAI
- How prompt injections and agent misalignment lead to real losses
- Attack techniques used against APIs, memory, and control logic
- Actionable defenses that go beyond basic input validation
- Lessons from 16 real-world cases across industries

Key Insights You Can’t Ignore

From prompt injection to flawed APIs, these incidents expose the most common ways AI systems fail—and what it costs when they do.

Insight 1

Prompt Injection Causes Over 30% of AI Security Failures

Prompt-based exploits account for 35.3% of all documented AI incidents, making them the most common failure type, ahead of unsafe outputs and data leakage. Language is now the primary attack vector, and most AI systems still lack effective defenses.

Insight 2

Simple Prompts Are Causing $100K+ in Financial Losses

Even basic prompt injections have triggered unauthorized crypto transfers, fake sales agreements, and brand-damaging behavior, causing losses of over $100,000 in incidents involving ElizaOS, AiXBT, and Chevrolet.

Insight 3

Technology Firms Lead, But No Sector Is Safe

Technology companies account for 35.3% of all documented AI security incidents, but finance, telecom, and retail are increasingly affected. From crypto agents to airline chatbots to internal data leaks, AI failures are spreading across every sector.

Insight 4

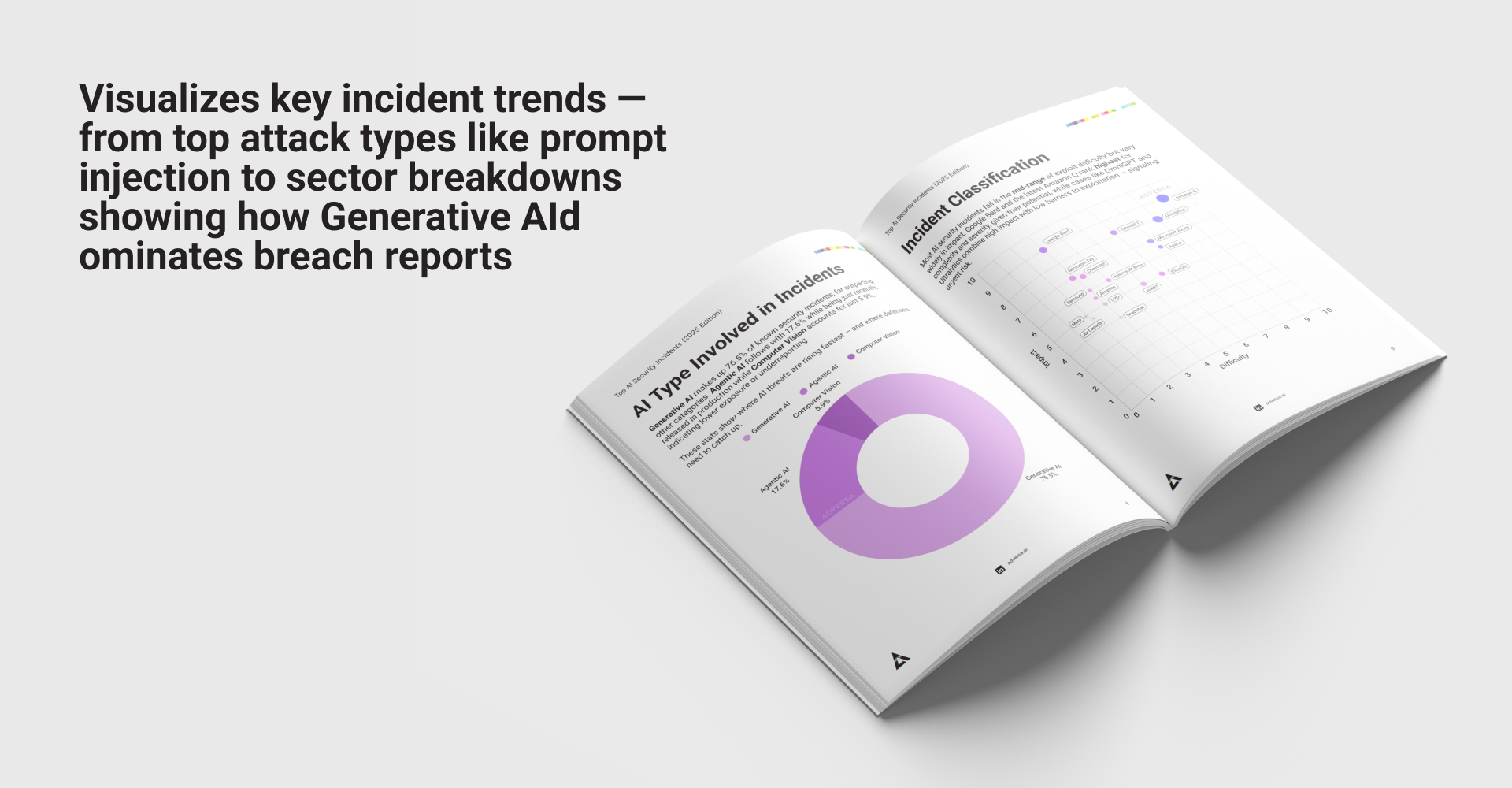

70.6% of Incidents Involve Generative AI — But Agentic AI Is the Real Threat

Generative AI accounts for 70.6% of all known incidents, but the most irreversible attacks, including cross-tenant data exposure and autonomous crypto theft, are emerging from Agentic AI systems with real-world action capabilities.

Insight 5

AI Security Incidents Have More Than Doubled Since 2024

AI-related breaches have skyrocketed in 2025, already surpassing prior years and showing no signs of slowing. The growth highlights a dangerous gap between AI adoption and security readiness across industries.

These incidents are more than technical failures — they’re signals

This report isn’t just a list of AI risks — it’s a practical guide built around real-world security breaches. Each page unpacks what went wrong, how it happened, and what defenders can do to prevent the same patterns from repeating.

Here’s a look inside.

We recognize the voices shaping the field