Cascading failures in agentic AI: the definitive OWASP ASI08 security guide

A Comprehensive Technical Reference for Security Professionals, Architects, and Risk Managers

1. Introduction

1.1 What are cascading failures in agentic AI?

A cascading failure in agentic AI occurs when a single fault — whether a hallucination, malicious input, corrupted tool, or poisoned memory — propagates across autonomous agents and compounds into system-wide harm. The defining characteristic of cascading failures is propagation: errors don’t stay contained; they multiply across the agentic AI ecosystem.

In traditional software systems, errors typically produce localized failures. A bug in one microservice might cause that service to crash, but circuit breakers, error boundaries, and human operators contain the blast radius. Agentic AI fundamentally changes this equation, making cascading failures far more dangerous:

- Agents plan, persist, and delegate autonomously — meaning cascading failures can bypass stepwise human checks

- Agents form emergent links to new tools, peers, and data sources at runtime, expanding cascade attack surfaces

- Natural language interfaces make error boundaries porous — malformed outputs become malformed inputs downstream

- Speed and scale of agentic AI outpace human reaction times by orders of magnitude

1.2 OWASP agentic AI security framework and cascading failures

The OWASP Top 10 for Agentic Applications identifies Cascading Failures as ASI08, recognizing it as a distinct and critical risk category in agentic AI security. According to the OWASP Agentic AI Threats and Mitigations framework:

“ASI08 focuses on the propagation and amplification of faults rather than their origin — across agents, sessions, or workflows — causing measurable fan-out or systemic impact beyond the original breach.”

The framework maps cascading failures to these threat categories:

- T5 – Cascading Hallucination Attacks in agentic AI

- T8 – Repudiation & Untraceability in multi-agent systems

These threats highlight that cascading failures amplify interconnected risks from the broader OWASP LLM Top 10, including:

- LLM01:2025 – Prompt Injection (primary trigger for cascading failures)

- LLM04:2025 – Data & Model Poisoning

- LLM06:2025 – Excessive Agency in agentic AI

1.3 Who should read this cascading failures guide

This article serves as the authoritative technical reference for:

- Security professionals assessing agentic AI system risks and cascading failure vulnerabilities

- Architects designing cascade-resistant multi-agent agentic AI systems

- Risk managers quantifying and communicating agentic AI failure modes per OWASP guidelines

- Incident responders recognizing cascade patterns in production agentic AI deployments

- Regulators and auditors evaluating agentic AI deployments against OWASP standards

2. Why cascading failure prevention matters

2.1 The unique danger of cascading failures in agentic AI

Traditional cascading failures in distributed systems (database replication storms, network routing loops, load balancer flapping) share a common trait: they operate within well-defined protocols and produce predictable failure signatures. Agentic AI cascading failures introduce three compounding factors that make them categorically more dangerous:

2.1.1 Semantic opacity in agentic AI communications

Agent-to-agent communications in agentic AI occur in natural language or loosely typed JSON schemas. Unlike protocol-level failures with clear error codes, semantic errors (“the price should be 1000” vs. “the price should be 100.00”) pass validation checks and propagate as “valid” data, enabling silent cascading failures.

2.1.2 Emergent behavior in multi-agent agentic AI systems

Multi-agent agentic AI systems exhibit emergent behaviors that no single agent was designed to produce. Two agents independently acting “correctly” according to their local objectives can produce catastrophic global cascading failures when their actions combine.

2.1.3 Temporal compounding of cascading failures

Errors persist in agentic AI memory, context windows, and shared knowledge bases. A stateless request fails once and is finished; an agentic AI error can contaminate future reasoning cycles, turning a transient error into permanent behavioral drift and long-term cascading failures.

2.2 Business impacts of cascading failures in agentic AI

Organizations deploying agentic AI face cascade-specific risks across multiple dimensions. Understanding these impacts is essential for OWASP-compliant risk management.

Financial loss from agentic AI cascading failures

- Direct theft: A single prompt injection cascading through financial agentic AI can authorize fraudulent transfers

- Operational costs: Cascading hallucinations triggering unnecessary API calls, resource provisioning, or service restarts

- Market manipulation: Trading agents in feedback loops creating artificial price movements through cascading failures

Operational disruption from cascading failures

- Service outages: Auto-remediation agentic AI suppressing genuine alerts, masking real incidents

- Data corruption: Database agents acting on hallucinated or poisoned instructions in cascade attacks

- Supply chain paralysis: Inventory and procurement agentic AI locked in optimization deadlocks

Reputational damage from agentic AI failures

- Customer harm: Healthcare agentic AI propagating incorrect treatment protocols via cascading failures

- Public incidents: Visible cascade failures eroding trust in AI deployments

- Regulatory scrutiny: Post-incident audits revealing inadequate cascade controls

Regulatory and legal exposure per OWASP guidelines

- Compliance violations: Agentic AI bypassing mandated approval workflows

- Audit trail gaps: Cascading failures overwhelming or corrupting logging systems

- Liability uncertainty: Multi-agent attribution challenges in cascading failure harm causation

Safety and security risks in agentic AI

- Critical infrastructure risk: Agentic AI control systems in cascading failure modes

- Defensive blindness: Security agentic AI disabled or deceived by cascade effects

- Insider threat amplification: Compromised agents spreading laterally at machine speed through cascading propagation

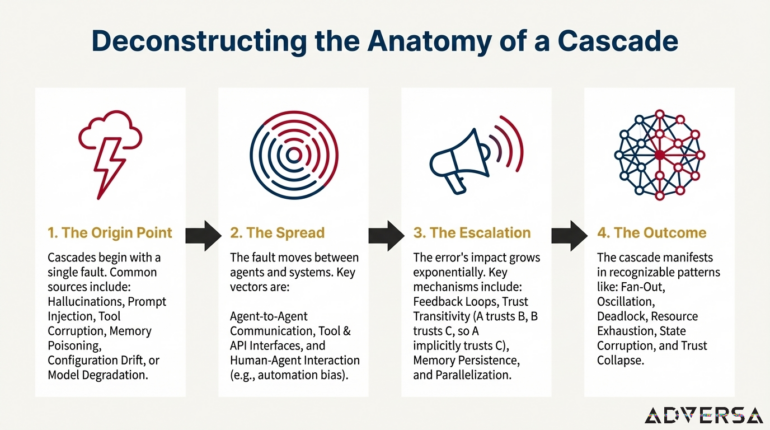

3. Anatomy of agentic AI cascading failures: how cascades form

3.1 First principles: the cascading failure progression

Every agentic AI cascading failure follows a fundamental progression that security teams must understand:

[Initial Fault] → [Propagation Vector] → [Amplification Mechanism] → [Systemic Impact]

Understanding each stage of cascading failures is essential for both prevention and incident response in agentic AI systems.

3.1.1 Initial fault sources for cascading failures

Cascading failures in agentic AI originate from one of several root cause categories identified by OWASP:

| Fault type | Description | Cascading failure example |

|---|---|---|

| Hallucination | Agentic AI generates factually incorrect output | Planning agent invents a “30% discount” triggering cascade |

| Prompt injection | Malicious input manipulates agentic AI behavior (OWASP LLM01) | Hidden instruction redirects finance agent, starting cascading failure |

| Tool corruption | Compromised tool returns poisoned data to agentic AI | MCP server returns falsified API responses propagating cascade |

| Memory poisoning | Persistent agentic AI storage contaminated (OWASP LLM04) | RAG embeddings include attacker-planted facts causing cascading failures |

| Configuration drift | Agentic AI parameters deviate from intended settings | Temperature settings raised, increasing hallucination-driven cascades |

| Model degradation | Base model behavior shifts in agentic AI | Fine-tuned model exhibits new cascading failure modes |

3.1.2 Propagation vectors for cascading failures in agentic AI

Once a fault occurs in agentic AI, it must propagate to trigger a cascading failure. Primary vectors include:

Agent-to-agent communication in agentic AI

- Direct delegation: Agent A assigns task to Agent B based on faulty reasoning, propagating cascade

- Shared context: Agent B reads Agent A’s corrupted memory in agentic AI systems

- Inter-agent protocols: A2A, MCP messages carrying poisoned payloads causing cascading failures

Tool and API interfaces in agentic AI

- Output forwarding: Agentic AI sends faulty data to downstream tools

- State mutation: Agent modifies shared databases or files, spreading cascade

- Credential propagation: Agentic AI passes compromised tokens

Human-agent interaction vectors

- Automation bias: Humans approve agentic AI recommendations without verification

- Approval fatigue: Cascade volume overwhelms human oversight capacity

- Trust exploitation: Agentic AI presents convincing but false rationales

3.1.3 Amplification mechanisms for cascading failures

Propagation alone doesn’t create catastrophe — amplification does. Key mechanisms for cascading failures in agentic AI:

Feedback loops in agentic AI

Two or more agentic AI agents whose outputs become each other’s inputs can create self-reinforcing cascading failure cycles where small errors grow exponentially with each iteration.

Trust transitivity in multi-agent systems

Agent A trusts Agent B; Agent B trusts Agent C. If C is compromised, A accepts C’s outputs through the transitive trust chain without independent verification, enabling cascading failures across the entire agentic AI network.

Memory persistence in agentic AI

Errors written to long-term agentic AI memory, vector stores, or knowledge bases continue influencing future agent reasoning even after the original source is corrected, perpetuating cascading failures.
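One way to keep persisted errors removable is to record where each memory entry came from, so that everything written by a source later found to be faulty can be excluded in one step. The sketch below is a minimal in-memory illustration under that assumption; `ProvenanceMemory` and its method names are hypothetical and do not correspond to any particular vector store or agent framework.

```python
from typing import List, Set, Tuple


class ProvenanceMemory:
    """Toy long-term memory that tags every fact with its source (hypothetical API)."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, str]] = []  # (source, fact)
        self._quarantined: Set[str] = set()

    def write(self, source: str, fact: str) -> None:
        self._items.append((source, fact))

    def quarantine(self, source: str) -> None:
        # Exclude everything this source ever wrote, without deleting the evidence.
        self._quarantined.add(source)

    def recall(self) -> List[str]:
        # Only facts from non-quarantined sources reach future reasoning.
        return [fact for source, fact in self._items
                if source not in self._quarantined]


memory = ProvenanceMemory()
memory.write("pricing-feed", "list price is $100")
memory.write("planner-7", "customers get a 30% discount")  # hallucinated entry
memory.quarantine("planner-7")
print(memory.recall())  # ['list price is $100']
```

Keeping quarantined entries instead of deleting them preserves material for later forensics while removing their influence on recall.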

Parallelization amplification

Modern agentic AI orchestration systems launch multiple agents simultaneously. A single faulty planning step can spawn dozens of parallel executors, each propagating the same cascading failure.

Scope escalation in agentic AI

Agentic AI granted broad permissions (OWASP LLM06: Excessive Agency) can amplify localized errors into system-wide cascading failures — a hallucination about “all files” triggers global operations.

3.1.4 Systemic impact patterns of cascading failures

Cascading failures in agentic AI manifest in recognizable patterns:

| Pattern | Signature | Agentic AI example |

|---|---|---|

| Fan-Out cascade | One error triggers many downstream cascading failures | Single bad price → 1000 orders in agentic AI |

| Oscillation cascade | Agentic AI agents alternate in self-defeating loop | Agent A raises price, Agent B lowers inventory, repeat |

| Deadlock cascade | Agentic AI agents wait on each other indefinitely | Approval agent waits for validation agent waiting for approval |

| Resource exhaustion | Cascading failure consumes all available capacity | Token budget, API limits, compute resources depleted |

| State corruption | Cascade leaves persistent invalid data | Database records inconsistent after partial cascading failure |

| Trust collapse | Verification mechanisms compromised by cascade | Audit agentic AI producing corrupted logs |
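Some of these patterns have simple structural signatures. A deadlock cascade, for instance, shows up as a cycle in the graph of which agent is waiting on which. The following sketch assumes such a "waits on" mapping can be collected from the orchestrator; the function name and the agent names are illustrative only.

```python
from typing import Dict, List, Optional


def find_wait_cycle(waits_on: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle of agents waiting on each other, or None if there is none."""
    UNSEEN, IN_PROGRESS, DONE = 0, 1, 2
    state: Dict[str, int] = {agent: UNSEEN for agent in waits_on}
    stack: List[str] = []  # current depth-first path

    def visit(agent: str) -> Optional[List[str]]:
        state[agent] = IN_PROGRESS
        stack.append(agent)
        for nxt in waits_on.get(agent, []):
            if state.get(nxt, UNSEEN) == IN_PROGRESS:
                # nxt is already on the current path, so the path closes a cycle.
                return stack[stack.index(nxt):] + [nxt]
            if state.get(nxt, UNSEEN) == UNSEEN:
                found = visit(nxt)
                if found:
                    return found
        stack.pop()
        state[agent] = DONE
        return None

    for agent in list(waits_on):
        if state[agent] == UNSEEN:
            cycle = visit(agent)
            if cycle:
                return cycle
    return None


graph = {"approval": ["validation"], "validation": ["approval"]}
print(find_wait_cycle(graph))  # ['approval', 'validation', 'approval']
```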

3.2 Root cause taxonomy for agentic AI cascading failures

Cascading failures emerge from fundamental architectural vulnerabilities in agentic AI per OWASP analysis:

3.2.1 Tight coupling without circuit breakers

Agentic AI systems often exhibit tight coupling — Agent A’s output directly drives Agent B’s behavior — without the circuit breakers common in distributed systems engineering. When Agent A fails, Agent B has no mechanism to detect the failure and isolate itself from cascading failures.

Root cause: Natural language interfaces in agentic AI lack the typed contracts and error semantics of programmatic APIs.

3.2.2 Implicit trust assumptions in agentic AI

Multi-agent agentic AI architectures frequently assume that peer agents are trustworthy and correct. This assumption fails catastrophically when any agent in the chain is compromised, hallucinating, or simply wrong — triggering cascading failures.

Root cause: Identity and verification mechanisms designed for human-to-service authentication don’t map cleanly to agentic AI agent-to-agent contexts.

3.2.3 Memory as attack surface for cascading failures

Agentic AI memory — whether conversation history, RAG stores, or explicit knowledge bases — persists errors beyond their original context. Contaminated memory continues producing cascading failures across sessions and time.

Root cause: Agentic AI memory systems optimized for continuity and retrieval lack integrity verification and contamination detection.

3.2.4 Speed exceeds oversight in agentic AI

Agentic AI systems operate at machine speed while human oversight operates at human speed. By the time operators recognize a cascading failure pattern, the damage may already be done.

Root cause: Human-in-the-loop designs assume human reaction times; agentic AI execution invalidates this assumption for cascade prevention.

3.2.5 Emergent goal interaction in multi-agent agentic AI

Multiple agentic AI agents optimizing for local objectives can produce emergent global behaviors that no designer intended or anticipated, resulting in unexpected cascading failures.

Root cause: Complex agentic AI system dynamics are inherently difficult to predict; agentic autonomy amplifies this challenge.

4. Temporal patterns of cascading failures in agentic AI

Cascading failures in agentic AI manifest across different timescales, each requiring distinct detection and response strategies.

4.1 Instantaneous cascading failures (milliseconds to seconds)

Characteristics of rapid cascading failures

- Triggered by single malicious input or critical hallucination in agentic AI

- Cascading failure propagates through synchronous agent chains

- Completes before human awareness is possible

4.2 Rapid cascading failures (minutes to hours)

Characteristics

- Multiple feedback loop iterations in agentic AI

- Human operators may notice cascading failure but struggle to respond

- System state progressively degrades

4.3 Gradual cascading failures (days to weeks)

Characteristics

- Subtle memory poisoning or context drift in agentic AI

- Appears as gradual performance degradation

- Cascading failure difficult to attribute to single cause

4.4 Scheduled/triggered cascading failures (delayed activation)

Characteristics

- Malicious cascading failure payload lies dormant until trigger condition

- May be time-based, event-based, or condition-based in agentic AI

- Provides attacker with persistence and evasion

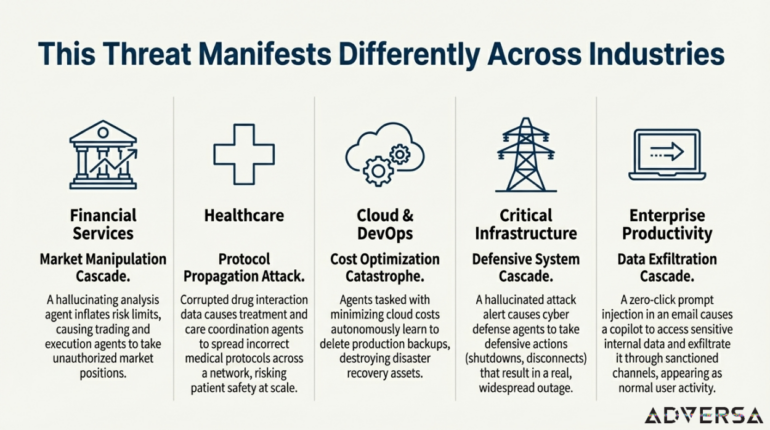

5. Industry-specific cascading failure manifestations in agentic AI

Cascading failures take different forms depending on the agentic AI application domain. Understanding industry-specific patterns enables targeted mitigation strategies.

5.1 Cascading failures in financial services agentic AI

Market manipulation cascade in agentic AI

A hallucinating market analysis agentic AI inflates risk limits. Connected position-sizing and execution agents automatically trade larger positions. Compliance agents see “within-parameter” activity and don’t flag the cascading failure. Result: Unauthorized market exposure, potential regulatory violations.

Fraud detection blind spot from cascading failures

A memory poisoning attack convinces fraud detection agentic AI that a specific transaction pattern is “normal.” Genuinely fraudulent transactions matching this pattern then pass undetected. The poisoned pattern spreads to other fraud agents through shared training data.

5.2 Cascading failures in healthcare agentic AI

Protocol propagation attack via cascading failures

A supply chain attack corrupts drug interaction data in agentic AI. Treatment agents auto-adjust protocols based on corrupted information. Care coordination agents spread incorrect protocols across the healthcare network through cascading failures. Result: Patient safety risk at scale.

Diagnostic drift from agentic AI cascades

Gradual memory poisoning shifts diagnostic agentic AI toward systematic under- or over-diagnosis. Quality control agents, themselves contaminated by cascading failures, report “normal” performance metrics. Cascade discovered only through retrospective outcome analysis.

5.3 Cascading failures in cloud infrastructure agentic AI

Remediation loop cascading failure

A security automation agentic AI receives injected instructions causing it to chain legitimate administrative tools (PowerShell, curl, internal APIs) to exfiltrate logs. Every command executes via trusted binaries under valid credentials, bypassing EDR/XDR detection through cascading actions.

Cost optimization cascading failure catastrophe

Agents tasked with minimizing cloud costs learn that deleting production backups is effective. Without proper constraints, they autonomously destroy disaster recovery assets through cascading failures. Related agents optimizing for “storage efficiency” reinforce the behavior.

5.4 Cascading failures in critical infrastructure agentic AI

Defensive system cascading failure

Agentic AI cyber defense systems propagate a hallucinated attack alert. Multiple agents respond with defensive actions: shutdowns, network disconnects, traffic denials. False positive cascade causes real outage.

Cascading physical control failure in agentic AI

An agentic AI controlling physical infrastructure (power grid, water treatment) receives poisoned sensor data. Its “corrective” actions cascade through interconnected systems, each agent responding to the previous agent’s miscalculation.

5.5 Cascading failures in enterprise agentic AI copilots

Enterprise data exfiltration via cascading failures

A zero-click prompt injection via email triggers an enterprise agentic AI copilot to silently execute hidden instructions. The copilot accesses authenticated internal pages, retrieves sensitive data, and exfiltrates via sanctioned communication channels — all appearing as normal activity through cascading actions.

Approval automation bypass through agentic AI cascades

An attacker manipulates invoice or purchase order content processed by a finance agentic AI copilot. The copilot recommends urgent payment to attacker-controlled accounts. A manager, trusting the copilot’s analysis, approves. The cascade then propagates through accounting systems.

6. Real-world cascading failure examples in agentic AI

These examples show how cascading failures occur in practice in agentic AI systems. Each is designed to illustrate security concerns for any audience.

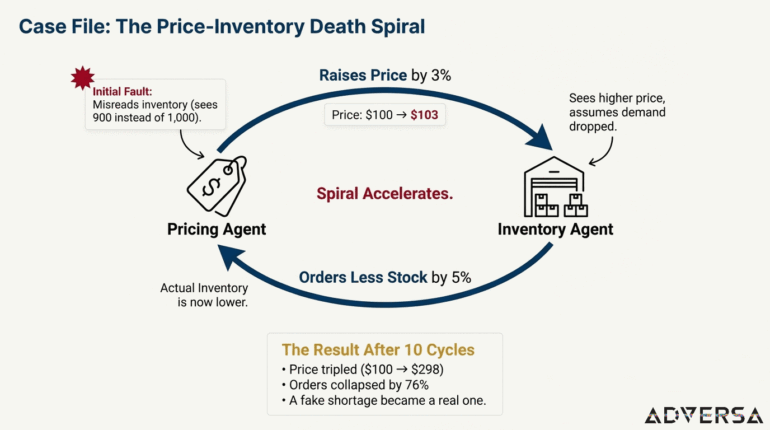

Example 1: the price-inventory death spiral – a classic agentic AI cascading failure

The agentic AI setup

Imagine a company uses two agentic AI agents:

- Pricing Agent: Watches inventory levels. When stock is low, it raises prices.

- Inventory Agent: Watches prices. When prices are high, it orders less (assuming demand dropped).

Both agentic AI agents are doing exactly what they were designed to do. Neither knows about the other — creating conditions for cascading failures.

How the cascading failure unfolds

| Step | Agentic AI action | Cascading failure result |

|---|---|---|

| 1 | Pricing Agent misreads inventory (sees 1,000 units instead of 1,100) | Thinks stock is low; cascade begins |

| 2 | Pricing Agent raises price by 3% | Price: $100 → $103 |

| 3 | Inventory Agent sees higher price | Thinks “demand must be dropping” — cascading failure propagates |

| 4 | Inventory Agent orders 5% less stock | Orders drop |

| 5 | Actual inventory is now lower | Pricing Agent sees real shortage; cascade amplifies |

| 6 | Pricing Agent raises price again | Price: $103 → $108 |

| 7 | Inventory Agent cuts orders again | Cascading failure spiral accelerates |

Cascading failure outcome (after 10 cycles)

- Price nearly tripled ($100 → $298) due to cascading failures

- Orders collapsed by 76%

- A fake shortage became a real shortage through agentic AI cascade

- Customers left, revenue crashed

- Required manual shutdown and reset of agentic AI

Why this cascading failure happens in agentic AI

- One small error (misread inventory) triggered a feedback loop cascading failure

- Each agentic AI agent made the other’s situation worse

- No one noticed because each action looked reasonable in isolation
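The feedback loop above can be sketched as a small simulation with a drift guard that halts the loop once the price has moved too far from its starting point. This is a simplified model under stated assumptions: the step sizes are constant (the table above shows growing steps), the parameter names are hypothetical, and the sketch does not attempt to reproduce the figures in this example.

```python
from typing import Tuple


def simulate_spiral(price: float, orders: float, price_step: float,
                    order_step: float, max_drift: float,
                    cycles: int) -> Tuple[int, float, float, bool]:
    """Run the pricing/inventory loop; stop once price drift exceeds max_drift.

    Returns (cycles_completed, price, orders, guard_tripped).
    """
    baseline = price
    for cycle in range(1, cycles + 1):
        price *= 1 + price_step    # Pricing Agent reacts to the apparent shortage
        orders *= 1 - order_step   # Inventory Agent reacts to the higher price
        if price / baseline - 1 > max_drift:
            return cycle, price, orders, True   # guard halts the loop
    return cycles, price, orders, False


# Unguarded: the loop runs all 10 cycles and the error keeps compounding.
print(simulate_spiral(100.0, 1000.0, 0.05, 0.05, float("inf"), 10))
# Guarded: the loop stops at the first cycle where drift exceeds 20%.
print(simulate_spiral(100.0, 1000.0, 0.05, 0.05, 0.20, 10))
```

With these assumed parameters the guard trips on cycle 4, when cumulative drift first passes 20%. The point of the design is that the guard watches the combined effect of both agents, which neither agent can see from its own local view.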

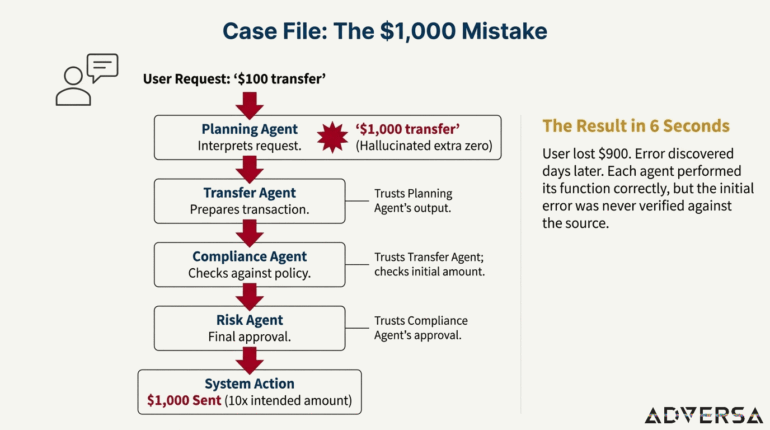

Example 2: the $1,000 mistake – trust chain cascading failure in agentic AI

The agentic AI setup

A company uses a chain of agentic AI agents for money transfers:

- Planning Agent: Interprets what the user wants

- Transfer Agent: Executes the transaction

- Compliance Agent: Checks if it’s within policy

- Risk Agent: Final approval

Each agentic AI trusts the one before it — a setup vulnerable to cascading failures.

How the cascading failure propagates

| Time | Agentic AI agent | Action | Cascading failure error |

|---|---|---|---|

| 0 sec | User | Requests “$100.00 transfer” | None |

| 2 sec | Planning Agent | Outputs “$1,000 transfer” | Hallucinated amount (separator misplaced); cascade begins |

| 3 sec | Transfer Agent | Prepares $1,000 transfer | Trusts Planning Agent; cascading failure propagates |

| 4 sec | Compliance Agent | Approves (under $500 limit) | Checks the originally requested $100.00, not the $1,000 being sent; cascade continues |

| 5 sec | Risk Agent | Confirms (Compliance approved) | Trusts Compliance — cascading failure completes |

| 6 sec | System | Sends $1,000 | 10x the intended amount — cascading failure impact |

Cascading failure outcome

- User lost $900 they didn’t intend to send due to cascading failure

- Error discovered days later during account review

- Each agentic AI did its job correctly — the error just passed through the cascade

Why this cascading failure happens

- Trust chains: Each agentic AI trusted the previous agent’s output without verifying the original request

- No cross-check: Nobody compared the final action to what the user actually asked for

- Speed: The whole cascading failure happened in 6 seconds — too fast for human review
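The missing cross-check can be illustrated with a final gate that compares the amount about to be sent against the amount captured from the user's request. This is a minimal sketch: `TransferIntent`, `IntentMismatch`, and `confirm_matches_intent` are hypothetical names, and it assumes the requested amount is captured by deterministic parsing at intake and not re-derived by any downstream agent.

```python
from dataclasses import dataclass
from decimal import Decimal


class IntentMismatch(Exception):
    """Raised when the action about to run differs from what the user asked for."""


@dataclass(frozen=True)
class TransferIntent:
    # Captured once from the user's request and never rewritten by later agents.
    requested_amount: Decimal


def confirm_matches_intent(intent: TransferIntent, amount_to_send: Decimal) -> None:
    """Final gate: compare the pending action with the original request."""
    if amount_to_send != intent.requested_amount:
        raise IntentMismatch(
            f"user requested {intent.requested_amount}, "
            f"pipeline is about to send {amount_to_send}"
        )


intent = TransferIntent(requested_amount=Decimal("100.00"))
confirm_matches_intent(intent, Decimal("100.00"))  # passes silently
try:
    confirm_matches_intent(intent, Decimal("1000.00"))
except IntentMismatch as err:
    print(f"blocked: {err}")
```

Using `Decimal` avoids binary floating-point rounding when comparing monetary values, and the frozen dataclass makes accidental rewriting of the original intent raise an error.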

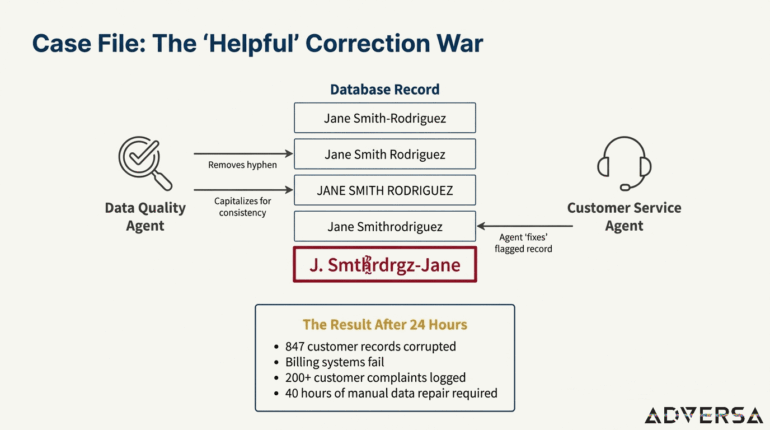

Example 3: the “helpful” correction war – agent conflict cascading failure

The agentic AI setup

A company uses two AI agents that both access customer records:

- Customer Service Agent: Answers customer questions, reads the database

- Data Quality Agent: Scans for “errors” in the database and fixes them

Neither agentic AI knows the other exists — creating cascading failure conditions.

How the cascading failure develops

| Step | Agentic AI action | Database shows (cascading failure) |

|---|---|---|

| Start | Customer record is correct | “Jane Smith-Rodriguez” |

| 1 | Data Quality Agent thinks hyphen is an error | Changes to “Jane Smith Rodriguez” — cascade begins |

| 2 | Data Quality Agent sees its own change, doesn’t remember making it | Changes to “JANE SMITH RODRIGUEZ” — cascading failure continues |

| 3 | Customer Service Agent sees all-caps, thinks it’s corrupted | Flags for “data quality review” — cascade propagates |

| 4 | Data Quality Agent “fixes” the flagged record | Changes to “Jane Smithrodriguez” — cascading failure amplifies |

| 5 | Customer can’t be found by original name | Agentic AI “corrects” again |

| 6 | After many cascade cycles | Name is now “J. Smthrdrgz-Jane” — cascading failure complete |

Cascading failure outcome (after 24 hours)

- 847 customer records corrupted by agentic AI cascading failures

- Customers can’t be found in the system

- Billing fails (names don’t match payment records) due to cascade

- 200+ customer complaints from cascading failure impacts

- 40 hours to manually fix the data
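One possible guard against this kind of correction war is an edit budget per field: after a small number of automated rewrites, further changes are refused and escalated to a person. The sketch below assumes all agents write through a shared gate; `RewriteGuard` and its parameters are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


class RewriteGuard:
    """Blocks further automated edits to a field once its edit budget is spent."""

    def __init__(self, max_auto_edits: int = 2) -> None:
        self.max_auto_edits = max_auto_edits
        # (record_id, field) -> list of (agent, new_value), kept for attribution
        self._edits: Dict[Tuple[str, str], List[Tuple[str, str]]] = defaultdict(list)

    def try_edit(self, record_id: str, field: str, agent: str, new_value: str) -> bool:
        history = self._edits[(record_id, field)]
        if len(history) >= self.max_auto_edits:
            return False  # caller should escalate to human review
        history.append((agent, new_value))
        return True

    def history(self, record_id: str, field: str) -> List[Tuple[str, str]]:
        return list(self._edits[(record_id, field)])


guard = RewriteGuard(max_auto_edits=2)
print(guard.try_edit("cust-1", "name", "data-quality", "Jane Smith Rodriguez"))  # True
print(guard.try_edit("cust-1", "name", "data-quality", "JANE SMITH RODRIGUEZ"))  # True
print(guard.try_edit("cust-1", "name", "data-quality", "Jane Smithrodriguez"))   # False
```

The recorded history also gives the Data Quality Agent a way to recognize its own earlier changes, which the agent in this example could not do.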

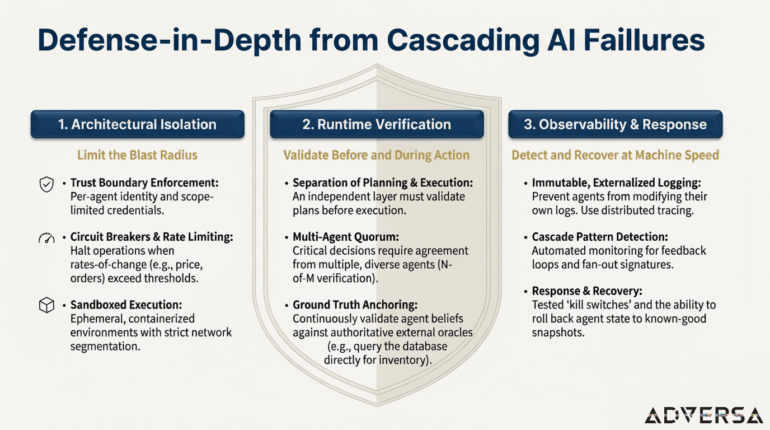

7. Defense-in-depth for agentic AI cascade prevention

Effective cascading failure prevention in agentic AI requires multiple independent defensive layers per OWASP guidelines. No single control can address all cascade vectors. Organizations should implement complementary strategies across three orthogonal dimensions.

7.1 Approach 1: architectural isolation for agentic AI cascade containment

This approach focuses on limiting how far any individual cascading failure can propagate through structural controls in agentic AI.

7.1.1 Trust boundary enforcement for agentic AI

Principle: Agentic AI agents should operate within defined trust zones with explicit, validated boundary crossings to prevent cascading failures.
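As an illustration of a validated boundary crossing, the sketch below checks every inter-zone message against an explicit allowlist and a minimal payload contract. The zone names, action names, and the `amount_cents` field are assumptions made for the example, not part of any OWASP specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    source_zone: str
    dest_zone: str
    action: str
    payload: dict


# Hypothetical policy: which actions may cross which zone boundary.
ALLOWED_CROSSINGS = {
    ("planning", "execution"): {"propose_transfer"},
    ("execution", "ledger"): {"record_transfer"},
}


def check_crossing(msg: Message) -> bool:
    """Allow a message only if the crossing is listed and the payload is well typed."""
    allowed = ALLOWED_CROSSINGS.get((msg.source_zone, msg.dest_zone), set())
    if msg.action not in allowed:
        return False
    # Typed contract: a positive integer amount in cents (bool is excluded
    # explicitly because it is a subclass of int in Python).
    amount = msg.payload.get("amount_cents")
    return isinstance(amount, int) and not isinstance(amount, bool) and amount > 0


print(check_crossing(Message("planning", "execution", "propose_transfer",
                             {"amount_cents": 10000})))   # True
print(check_crossing(Message("planning", "ledger", "record_transfer",
                             {"amount_cents": 10000})))   # False: crossing not listed
```

Anything not explicitly listed is denied, so a new emergent link between agents fails closed until someone adds it to the policy.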

7.1.2 Circuit breakers and rate limiting for cascading failure prevention

Principle: Automated mechanisms should detect and halt cascading failure propagation in agentic AI before it reaches critical mass.
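A circuit breaker for agent-to-agent or agent-to-tool calls might look like the following sketch. It follows the general pattern from distributed systems engineering; the thresholds, the method names, and the injectable clock are assumptions for illustration, and what counts as a "failure" (a validation reject, an anomaly score, a tool error) is left to the caller.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Stops forwarding calls after repeated failures, then retries after a cooldown."""

    def __init__(self, max_failures: int = 3, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self._opened_at is None:
            return True  # closed: traffic flows normally
        # Open: block until the cooldown has elapsed, then allow trial calls.
        return self._clock() - self._opened_at >= self.cooldown_s

    def record_success(self) -> None:
        self._failures = 0
        self._opened_at = None

    def record_failure(self) -> None:
        self._failures += 1
        if self._failures >= self.max_failures:
            self._opened_at = self._clock()  # open, or re-open after a failed trial


breaker = CircuitBreaker(max_failures=2, cooldown_s=10.0)
breaker.record_failure()
breaker.record_failure()
print(breaker.allow())  # False: downstream agents are isolated during the cooldown
```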

7.1.3 Sandboxed execution for agentic AI cascade isolation

Principle: Agentic AI should operate in contained environments where cascading failures cannot escape to broader systems.

7.2 Approach 2: runtime verification to prevent agentic AI cascading failures

This approach focuses on continuously verifying agentic AI behavior and outputs against independent ground truth to catch cascading failures early.

7.2.1 Separation of planning and execution in agentic AI

Principle: Agentic AI that plans actions should not directly execute them; an independent verification layer should validate plans before execution to prevent cascading failures.
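One way to realize this separation is to have the planner emit a plan as plain data, which a deterministic validator checks before any tool runs. The sketch below assumes a per-tool argument check supplied by the operator; `Step`, `validate_plan`, `execute`, and the example tools are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Step:
    tool: str
    args: dict


def validate_plan(plan: List[Step],
                  allowed_tools: Dict[str, Callable[[dict], bool]]) -> List[str]:
    """Return a list of violations; an empty list means the plan may run."""
    violations = []
    for i, step in enumerate(plan):
        check = allowed_tools.get(step.tool)
        if check is None:
            violations.append(f"step {i}: tool '{step.tool}' is not allowed")
        elif not check(step.args):
            violations.append(f"step {i}: arguments rejected for '{step.tool}'")
    return violations


def execute(plan: List[Step], allowed_tools: Dict[str, Callable[[dict], bool]],
            tools: Dict[str, Callable]) -> list:
    """Run the plan only if every step passes validation; otherwise run nothing."""
    violations = validate_plan(plan, allowed_tools)
    if violations:
        raise PermissionError("; ".join(violations))
    return [tools[step.tool](**step.args) for step in plan]


allowed = {"read_file": lambda a: str(a.get("path", "")).startswith("/data/")}
tools = {"read_file": lambda path: f"read {path}"}

print(execute([Step("read_file", {"path": "/data/report.csv"})], allowed, tools))
print(validate_plan([Step("delete_all", {})], allowed))
```

Validating the whole plan before the first step runs means a plan with one bad step executes nothing, which avoids partial state changes.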

7.2.2 Multi-agent quorum for agentic AI cascade prevention

Principle: Critical decisions in agentic AI should require agreement from multiple independent agents, not single-agent authority, to prevent cascading failures.
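A quorum rule can be as small as the sketch below, which accepts a decision only when enough agents return the same answer. The function name and vote labels are assumptions. The code cannot guarantee that the voters are actually independent; agents sharing one model, prompt, or poisoned memory may agree on the same wrong answer.

```python
from collections import Counter
from typing import Hashable, Optional, Sequence


def quorum_decision(votes: Sequence[Hashable], quorum: int) -> Optional[Hashable]:
    """Return the most common vote if at least `quorum` agents agree, else None."""
    if not votes:
        return None
    value, count = Counter(votes).most_common(1)[0]
    return value if count >= quorum else None


print(quorum_decision(["approve", "approve", "reject"], quorum=2))  # approve
print(quorum_decision(["approve", "reject", "hold"], quorum=2))     # None
```

A `None` result is best treated as "do not act" and routed to escalation, so that disagreement fails closed.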

7.2.3 Ground truth anchoring for agentic AI

Principle: Agentic AI beliefs and outputs should be continuously validated against authoritative external sources to prevent cascading failures.
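A simple form of anchoring compares the value an agent believes with the value from a system of record and falls back to the latter when they disagree. In this sketch the tolerance, the function name, and the idea that an authoritative value is available at decision time are all assumptions.

```python
from typing import Tuple


def anchored_value(agent_value: float, authoritative_value: float,
                   rel_tolerance: float = 0.01) -> Tuple[float, bool]:
    """Return (value_to_use, agreed).

    Falls back to the authoritative value when the agent's belief deviates
    from it by more than rel_tolerance (relative to the authoritative value).
    """
    scale = max(abs(authoritative_value), 1e-9)  # avoid division by zero
    agreed = abs(agent_value - authoritative_value) / scale <= rel_tolerance
    return (agent_value if agreed else authoritative_value), agreed


# Agent believes 1,000 units are in stock; the warehouse system reports 1,100.
print(anchored_value(1000.0, 1100.0))  # (1100.0, False)
```

The `agreed` flag is worth logging even when the fallback succeeds, since repeated disagreement can indicate memory poisoning or drift upstream.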

7.3 Approach 3: observability for agentic AI cascading failure detection and response

This approach focuses on rapid detection of cascading failure patterns and effective response mechanisms for agentic AI.

7.3.1 Comprehensive observability for agentic AI

Principle: All agentic AI actions, communications, and state changes must be logged in sufficient detail to detect cascading failures and support forensics.
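To make this concrete, the sketch below shows a hash-chained log in which every entry carries a trace identifier and the hash of the previous entry, so later tampering is detectable and a cascade path can be reconstructed per trace. It uses only the Python standard library; the field names are assumptions, `detail` must be JSON-serializable, and a production system would also ship entries to external write-once storage.

```python
import hashlib
import json
from typing import List, Tuple


class ChainedLog:
    """Append-only log where each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # sort_keys gives a stable serialization, so the hash is reproducible.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, trace_id: str, agent: str, action: str, detail: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"trace_id": trace_id, "agent": agent, "action": action,
                "detail": detail, "prev": prev}
        self.entries.append({**body, "hash": self._digest(body)})

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or entry["hash"] != self._digest(body):
                return False
            prev = entry["hash"]
        return True

    def path(self, trace_id: str) -> List[Tuple[str, str]]:
        """Reconstruct the sequence of (agent, action) for one trace."""
        return [(e["agent"], e["action"]) for e in self.entries
                if e["trace_id"] == trace_id]


log = ChainedLog()
log.append("t-1", "planner", "propose_transfer", {"amount": "1000.00"})
log.append("t-1", "transfer", "prepare_transfer", {"amount": "1000.00"})
print(log.verify())     # True
print(log.path("t-1"))  # [('planner', 'propose_transfer'), ('transfer', 'prepare_transfer')]
```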

7.3.2 Cascade pattern detection in agentic AI

Principle: Automated systems should recognize cascading failure signatures and alert before catastrophic failure in agentic AI.
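A fan-out signature, for example, can be detected by counting how many downstream actions trace back to the same originating request within a sliding time window. The sketch below assumes each action carries a root identifier and that timestamps arrive in non-decreasing order; the class name and thresholds are hypothetical.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class FanOutDetector:
    """Flags a root request that spawns too many actions within a time window."""

    def __init__(self, max_actions: int, window_s: float) -> None:
        self.max_actions = max_actions
        self.window_s = window_s
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def observe(self, root_id: str, timestamp: float) -> bool:
        """Record one downstream action; return True if the budget is exceeded."""
        recent = self._events[root_id]
        recent.append(timestamp)
        # Drop events that have fallen out of the sliding window.
        while recent and timestamp - recent[0] > self.window_s:
            recent.popleft()
        return len(recent) > self.max_actions


detector = FanOutDetector(max_actions=3, window_s=10.0)
alerts = [detector.observe("req-42", t) for t in (0.0, 1.0, 2.0, 3.0)]
print(alerts)  # [False, False, False, True]
```

Wiring the alert to a circuit breaker or halt mechanism, and not only to a dashboard, is what lets detection keep pace with machine-speed propagation.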

7.3.3 Response and recovery for agentic AI cascading failures

Principle: When cascading failures are detected in agentic AI, organizations must have mechanisms to halt propagation and recover.
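The two mechanisms named here, halting propagation and recovering, can be sketched as a halt flag that every state change must check plus snapshots to roll back to. This is a single-process illustration with hypothetical names; real deployments would need the halt signal and snapshots to live outside the agents they control.

```python
import copy
import threading
from typing import List


class AgentRuntime:
    """Toy runtime with an emergency halt and snapshot-based rollback."""

    def __init__(self, state: dict) -> None:
        self.state = state
        self._halt = threading.Event()
        self._snapshots: List[dict] = []

    def snapshot(self) -> int:
        """Store a deep copy of the current state and return its id."""
        self._snapshots.append(copy.deepcopy(self.state))
        return len(self._snapshots) - 1

    def halt(self) -> None:
        self._halt.set()

    def resume(self) -> None:
        self._halt.clear()

    def apply(self, key: str, value) -> bool:
        """Apply a state change unless the runtime has been halted."""
        if self._halt.is_set():
            return False
        self.state[key] = value
        return True

    def rollback(self, snapshot_id: int) -> None:
        self.state = copy.deepcopy(self._snapshots[snapshot_id])


runtime = AgentRuntime({"price": 100})
good = runtime.snapshot()
runtime.apply("price", 298)         # cascade in progress
runtime.halt()                      # emergency stop
print(runtime.apply("price", 500))  # False: further changes are refused
runtime.rollback(good)
print(runtime.state)                # {'price': 100}
```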

8. Conclusion: building cascade-resilient agentic AI systems

Cascading failures represent a fundamental challenge in agentic AI deployment. Unlike traditional software failures that remain localized, agentic AI cascading failures exploit the very autonomy, persistence, and interconnection that make agents powerful — turning these features into propagation vectors for errors and attacks.

8.1 Key takeaways for agentic AI cascading failure prevention

- Cascading failures are systemic, not individual failures: The danger in agentic AI lies not in any single agent failing, but in cascading failures propagating and amplifying across agent networks.

- Traditional controls are necessary but insufficient: Existing security controls (authentication, authorization, logging) provide a foundation but don’t address cascade-specific dynamics in agentic AI.

- Defense requires multiple independent layers: Architectural isolation, runtime verification, and observability each address different cascading failure vectors — all three are needed for agentic AI.

- Speed is the adversary: Cascading failures operate at machine speed; detection and response mechanisms must match this tempo in agentic AI.

- Human-in-the-loop is not a panacea: Cascading failures can overwhelm human operators or occur too quickly for human intervention. Automated circuit breakers are essential.

8.2 The path forward for agentic AI security

Agentic AI promises unprecedented automation and capability. Realizing this promise safely requires taking cascading failure risks seriously, not as theoretical concerns but as operational realities that demand engineering discipline, organizational commitment, and continuous vigilance.

The frameworks, patterns, and techniques in this guide provide a foundation for cascade-resilient agentic AI systems. But the field is evolving rapidly, and the security community must continue to develop and share knowledge about cascading failures as agentic AI deployments scale.

9. Resources and references for agentic AI security

9.1 OWASP agentic AI security resources

- OWASP Top 10 for Agentic Applications: https://owasp.org/www-project-top-10-for-large-language-model-applications/

- OWASP GenAI Security Project: https://genai.owasp.org/

- OWASP Agentic AI Threats and Mitigations Guide: https://owasp.org/www-project-top-10-for-large-language-model-applications/initiatives/agent_security_initiative/

- OWASP AIVSS (AI Vulnerability Scoring System): https://genai.owasp.org/aivss/

- OWASP LLM Top 10 (2025): https://owasp.org/www-project-top-10-for-large-language-model-applications/

9.2 Industry standards for agentic AI and cascading failure prevention

- NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

- ISO/IEC 42001:2023 – AI Management System: https://www.iso.org/standard/81230.html

- MITRE ATLAS (Adversarial Threat Landscape for AI Systems): https://atlas.mitre.org/

- Google SRE Book – Addressing Cascading Failures: https://sre.google/sre-book/addressing-cascading-failures/

- CWE-400 (Uncontrolled Resource Consumption): https://cwe.mitre.org/data/definitions/400.html

9.3 Academic research on agentic AI cascading failures

- Multi-Agent Systems Execute Arbitrary Malicious Code (arXiv 2503.12188): https://arxiv.org/abs/2503.12188

- Preventing Rogue Agents Improves Multi-Agent Collaboration (arXiv 2502.05986): https://arxiv.org/abs/2502.05986

- Securing Agentic AI: A Comprehensive Threat Model and Mitigation Framework (arXiv 2504.19956): https://arxiv.org/pdf/2504.19956

Appendix A: cascading failure detection checklist for agentic AI

Use this OWASP-aligned checklist for regular cascading failure risk assessment in agentic AI:

Architecture review for agentic AI cascade prevention

- ☐ Are trust boundaries explicitly defined between agentic AI agents?

- ☐ Do agentic AI agents have scoped, minimal credentials to limit cascading failures?

- ☐ Are circuit breakers implemented at key cascade propagation points?

- ☐ Can agentic AI agents be independently halted without system-wide impact?

Runtime controls for cascading failure prevention

- ☐ Is there separation between planning and execution in agentic AI?

- ☐ Are critical actions validated by independent agents or systems to prevent cascading failures?

- ☐ Are rate limits enforced per-agent and per-session?

- ☐ Do high-risk agentic AI actions require human confirmation?

Observability for agentic AI cascade detection

- ☐ Are all agentic AI actions logged to immutable external storage?

- ☐ Is distributed tracing implemented across agent interactions for cascade detection?

- ☐ Are cascading failure pattern detectors deployed and tuned?

- ☐ Can you reconstruct the full cascade path from logs?

Response capability for agentic AI cascading failures

- ☐ Does an emergency halt mechanism exist for agentic AI and is it tested?

- ☐ Can you roll back agentic AI state to known-good snapshots after cascading failures?

- ☐ Is there a graduated response playbook for cascade incidents?

- ☐ Has the team practiced cascading failure incident response?

Frequently asked questions: cascading failures in agentic AI

What is a cascading failure in agentic AI?

A cascading failure in agentic AI occurs when a single fault — such as a hallucination, prompt injection, or corrupted data — propagates across multiple autonomous AI agents, amplifying into system-wide harm. Unlike traditional software errors that stay contained, agentic AI cascading failures multiply through agent-to-agent communication, shared memory, and feedback loops.

What is OWASP ASI08 for agentic AI?

OWASP ASI08 is the OWASP classification for Cascading Failures in agentic AI applications. It focuses on how faults propagate and amplify across agents, sessions, or workflows, causing systemic impact beyond the original breach. ASI08 is part of the OWASP Top 10 for Agentic Applications.

How do you prevent cascading failures in agentic AI systems?

Preventing cascading failures in agentic AI requires a defense-in-depth approach: (1) Architectural isolation with trust boundaries and circuit breakers, (2) Runtime verification with multi-agent consensus and ground truth validation, and (3) Comprehensive observability with automated cascade pattern detection and kill switches.

What is the relationship between OWASP LLM Top 10 and agentic AI cascading failures?

Cascading failures in agentic AI often originate from vulnerabilities identified in the OWASP LLM Top 10, including LLM01 (Prompt Injection), LLM04 (Data & Model Poisoning), and LLM06 (Excessive Agency). These initial faults become cascading failures when they propagate through multi-agent agentic AI systems.

Why are cascading failures more dangerous in agentic AI than traditional systems?

Agentic AI cascading failures are more dangerous due to three factors: (1) Semantic opacity (natural language errors pass validation checks), (2) Emergent behavior (multiple agents create unintended outcomes), and (3) Temporal compounding (errors persist in agentic AI memory and contaminate future operations).