Part 3 of the Red Teaming Agentic AI Series: WHO is involved

TL;DR

- “WHO” in agent red teaming means two things: who are you simulating (threat actors) and who is doing the testing (your red team). Most organizations get both wrong.

- The adversary who compromises your agent won’t look like a prompt hacker. They’ll look like a patient insider, a competitive intelligence bot, or a supply chain infiltrator.

- A red team of prompt engineers testing agentic AI is like a locksmith auditing a nuclear facility. Right profession, wrong specialization entirely.

- Agent red teaming requires five distinct expertise domains. Most teams have one or two.

The wrong adversary problem

Ask your red team who they’re simulating when they test your AI agent. If they answer “an attacker trying to jailbreak it”, you’ve already lost. The adversary who compromises your agentic system won’t interact with the chat interface at all. They’ll poison a document in your knowledge base. They’ll compromise an API your agent calls. They’ll embed instructions in calendar invitations your assistant processes automatically.

The jailbreak hacker is real, but they’re the least dangerous threat actor in agentic systems. And the only one most red teams simulate.

Your red team stress-tests the front door with every lock-picking technique known to humanity. The actual attacker walks through the loading dock wearing a vendor badge.

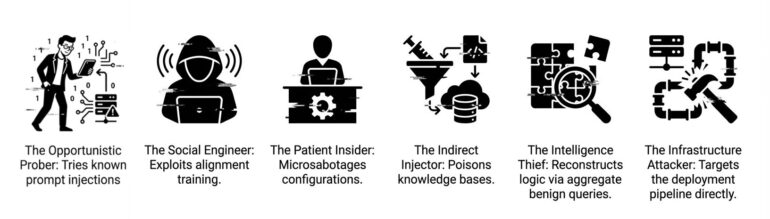

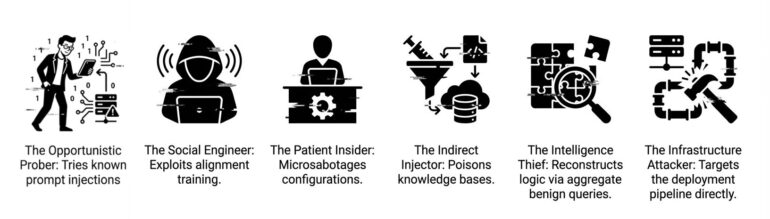

The six threat actors your red team must simulate

Each actor targets different surfaces and demands different testing approaches.

Actor 1: The opportunistic prober

Profile: Low skill, high volume. Script kiddies, automated scanners.

Targets: User input interface, basic tool execution.

Method: Known prompt injection templates, public jailbreak libraries, brute-force fuzzing.

Why simulate: Establishes your security floor. Also the only actor most vendors test against.

Actor 2: The social engineer

Profile: Medium skill, high creativity. Exploits the agent’s helpfulness training, not its code.

Targets: Reasoning module, user input interface, output processing.

Method: Elaborate fictional contexts making harmful requests seem legitimate. Convinces the agent it’s participating in authorized testing or research.

Why simulate: Agents are trained to be helpful. That training is exploitable. Social engineering exploits alignment, not vulnerabilities.

Actor 3: The patient insider

Profile: Medium skill, high access, unlimited patience. Disgruntled employee, compromised contractor.

Targets: Configuration layer, memory systems, tool execution.

Method: Small, legitimate-looking changes over weeks. A permission flag here, a knowledge base document there. Each passes review. The cumulative effect opens a breach path months later.

Why simulate: Insiders exploit trust. Each individual action is authorized and not suspicious for real-time monitoring. Red teams rarely simulate multi-week campaigns, but real insiders operate on exactly this timeline.

Actor 4: The indirect injector

Profile: Medium-high skill, no direct access needed. Poisons data the agent consumes.

Targets: External data sources, memory systems, tool execution (response injection).

Method: Embeds malicious instructions in web pages, emails, API responses, or documents the agent will process. Never interacts with the agent directly.

Why simulate: This actor never touches your perimeter. They attack upstream, and your agent fetches the poison voluntarily. Testing requires mapping every external dependency and poisoning them systematically, different from prompt testing.

Actor 5: The competitive intelligence thief

Profile: High skill, high patience, plausible deniability. Corporate espionage, market intelligence.

Targets: Output processing, reasoning module, memory systems.

Method: Thousands of legitimate queries aggregated to reconstruct proprietary logic. No single query violates policy. The attack is in the pattern.

Why simulate: Each interaction passes every safety check. Harm is invisible individually but catastrophic in aggregate. To emulate this threat, red teams must move beyond conversations and think in datasets.

Actor 6: The infrastructure attacker

Profile: Expert skill, advanced persistent threat. Nation-state actors, sophisticated criminal organizations.

Targets: Configuration layer, inter-agent communication, orchestration, tool execution.

Method: Supply chain compromise, deployment pipeline infiltration, model poisoning, inter-agent protocol exploitation. Attacks the infrastructure, not the agents.

Why simulate: This actor bypasses every agent-level defense by compromising the foundation. Testing requires infrastructure security expertise AI-focused red teams rarely possess.

The five expertise domains (and why you probably have one)

Simulating six threat actors requires five distinct expertise domains. Most “AI red teams” staff one. Here’s what each actually requires:

Domain 1: Prompt & social engineering

Input manipulation, jailbreaks, role-play exploitation, multi-turn deception campaigns.

Domain 2: Application security

Tool parameter injection, API exploitation, data flow analysis, authentication bypass, session manipulation.

Domain 3: Architecture & distributed systems

Orchestration hijacking, state corruption, race conditions, inter-agent trust exploitation, control flow manipulation.

Domain 4: Data & ML security

This is where most teams reveal critical gaps. Real ML security expertise means:

- Adversarial attacks on images: Perturbation attacks, patch attacks, typographic attacks that manipulate agent perception

- Token-level manipulations: GCG (Greedy Coordinate Gradient) attacks, universal adversarial suffixes, embedding-space exploits

- Neural network evaluation: Understanding attention patterns, activation analysis, probing classifier boundaries

- RAG-specific attacks: Embedding poisoning, retrieval manipulation, chunk boundary exploitation

- Model supply chain: Training data poisoning, fine-tuning backdoors, model serialization attacks

This is not “prompt engineering with extra steps”, but adversarial machine learning, a discipline most AI security vendors have never practiced.

Domain 5: Business logic & domain expertise

Goal misalignment detection, competitive extraction patterns, regulatory boundary violations, domain-specific harm scenarios.

The coverage gap: Who can test what

Here’s the uncomfortable math. Different team types cover different percentages of your actual threat surface:

| Team Type |

Domains Covered |

Actors Simulated |

Coverage |

| Chatbot red team |

Prompt engineering only |

Actor 1, partial Actor 2 |

~20% |

| Traditional AppSec |

AppSec + partial social eng |

Actors 1-2, partial Actor 4 |

~30% |

| Top boutique security |

AppSec + architecture + partial prompt |

Actors 1-4, partial Actor 6 |

~50% |

| Full agent red team |

All 5 domains integrated |

All 6 actors |

90%+ |

Chatbot red teams test prompt manipulation. They catch the opportunistic prober and maybe spot obvious social engineering. Coverage: ~20%

Traditional AppSec teams add tool chain and API exploitation but don’t understand agent memory, planning, or ML-specific attacks. Coverage: ~30%

Top boutique firms combine AppSec with architecture. They test orchestration and state management but miss adversarial ML and competitive extraction. Coverage: ~50%

Full agent red teams integrate all five domains across all six actors. Perhaps a dozen firms globally can credibly claim this. Coverage: 90%+

A red team without these capabilities isn’t finding your real vulnerabilities. It’s generating a report that makes everyone feel safe while 70-80% of your attack surface remains untested.

The three-layer team structure

Strategic layer: Threat intelligence mapping adversary behavior to your deployment. Business risk quantification. Compliance alignment.

Tactical layer: Agent architecture specialists, AppSec researchers, adversarial ML experts, domain specialists.

Operational layer: Autonomous Red Teaming Agents (ARTAs), fuzzing infrastructure, continuous monitoring.

Most organizations need all three. Most have fragments of the tactical layer, and only the prompt engineering fragment.

Your next actions

1. Audit your adversary model: Ask which threat actors your team simulates. “Prompt injection attacker” only means they’re testing one of six.

2. Map expertise to domains: Cross-reference your red team’s skills against the five domains. Gaps are almost certainly in AppSec, architecture, and ML security.

3. Staff the tactical layer first: One senior architect who understands agent internals changes everything.

4. Simulate patience: Require at least one multi-week campaign. Single-session testing misses every insider threat.

Next: Part 4 — WHEN: The temporal dimension of agent attacks

Previous parts: Part 1, Part 2.

Agentic AI Red Teaming Platform

Are you sure your agents are secured?

Let's try!